Spurred by the current lack of a Linux SDK for the Oculus Rift DK2, a group of developers set out to dissect the Rift’s optical tracking system by reverse engineering the DK2. The information they painstakingly gathered is quite technical, so we’ve rounded up the critical points in an easier-to-read format to show why this community project is important.

A big hat tip to Brad Davis and Oliver Kreylos, who spearheaded the reverse engineering effort. Davis got the ball rolling by discovering the LED activation codes and sharing his initial findings with others. Kreylos contributed by helping to correct the camera’s native view, among other things, and also collected on his blog the key information from the collaborative effort that Davis began in a thread in the Oculus sub-section of Reddit.

Davis is a software development engineer at Amazon and the maintainer of the community version of the Oculus VR SDK on GitHub. He’s also publishing a book on the Oculus Rift that offers a technical deep dive into the VR headset.

Kreylos is a PhD virtual reality researcher who works at the Institute for Data Analysis and Visualization, and the W.M. Keck Center for Active Visualization at the University of California, Davis.

See Also: VR Researcher Flips the Script, Augments Virtual Reality with Actual Reality

Here is what’s been gleaned so far:

The Tracking LEDs Can Be Triggered and Gathered with Specific Code

While figuring out how the camera interfaces with the head-mounted display, the developers uncovered several mysterious functions of the DK2.

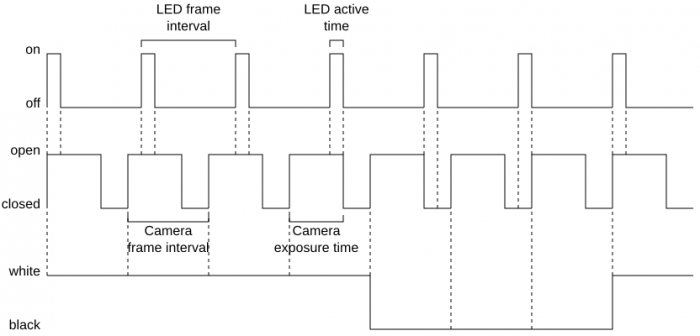

Sending a Human Interface Device (HID) feature report turns on the LEDs for ten seconds and then causes them to flicker in odd ways. At first, the community was unsure why and speculated widely about it online. Later, GitHub user pH5 discovered that each LED flashes at a specific frequency and with varying levels of brightness. This allows the tracking camera to tell the LEDs apart based on their exact blinking patterns. With this knowledge, an LED identification algorithm can be written for the DK2 camera.
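To make the identification idea concrete, here is a minimal sketch in Python. It assumes, purely for illustration, that each LED repeats a short bright/dim bit pattern and that the observer does not know where in the cycle it started watching. The pattern table `PATTERNS` and the function names are hypothetical and are not taken from the DK2 firmware or from the community's code.

```python
def to_bits(brightness):
    """Classify each brightness sample as bright (1) or dim (0) around the midpoint."""
    mid = (min(brightness) + max(brightness)) / 2
    return tuple(1 if b > mid else 0 for b in brightness)


def identify(brightness, patterns):
    """Return the id of the LED whose pattern matches the samples under some
    cyclic rotation, or None if nothing matches."""
    bits = to_bits(brightness)
    n = len(bits)
    for led_id, pattern in patterns.items():
        if len(pattern) != n:
            continue
        for shift in range(n):
            # Rotate the observed bits, since the starting phase is unknown.
            if bits[shift:] + bits[:shift] == tuple(pattern):
                return led_id
    return None


# Hypothetical pattern table: the ids and bit patterns are made up for this example.
PATTERNS = {
    0: (1, 0, 0, 0, 0, 0),
    1: (1, 1, 0, 0, 0, 0),
    2: (1, 0, 1, 0, 0, 0),
}

# Brightness of one tracked blob over six consecutive frames.
samples = [40, 200, 45, 210, 50, 42]
led = identify(samples, PATTERNS)
```

The patterns in the table are chosen so that no rotation of one equals another; without that property, a rotation-tolerant match like this one would be ambiguous.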

Synchronization of the Headset and Camera is Achieved after Fixing a Few Problems

The tracking camera is a standard USB webcam with an infrared filter, used to pick up the blinking of the DK2’s LEDs. Digging into the camera’s firmware turned up an unexpected bug: the device advertised a resolution of 376×480 pixels when the camera was in fact set to 752×480 pixels. Without correcting for this, the camera image was stretched and distorted.
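The two widths differ by exactly a factor of two, which suggests one way the mismatch could be worked around. The sketch below assumes (this is not stated above) that the advertised format uses two bytes per pixel while the sensor really delivers one byte per pixel, so the frame buffer is the same size under both readings and only needs to be sliced differently. The function name and the `bytes_per_advertised_pixel` parameter are hypothetical.

```python
ADVERTISED_WIDTH = 376  # width the camera reports
ACTUAL_WIDTH = 752      # width the sensor is actually set to
HEIGHT = 480


def reinterpret_frame(raw, bytes_per_advertised_pixel=2):
    """Slice a raw frame buffer into HEIGHT rows of ACTUAL_WIDTH one-byte samples.

    Assumption: the advertised format stores two bytes per pixel, so the
    buffer length is identical under both interpretations.
    """
    advertised_size = ADVERTISED_WIDTH * HEIGHT * bytes_per_advertised_pixel
    actual_size = ACTUAL_WIDTH * HEIGHT
    if len(raw) != advertised_size or advertised_size != actual_size:
        raise ValueError("unexpected frame size")
    return [raw[y * ACTUAL_WIDTH:(y + 1) * ACTUAL_WIDTH] for y in range(HEIGHT)]


# A blank dummy frame stands in for real camera data.
rows = reinterpret_frame(bytes(ACTUAL_WIDTH * HEIGHT))
```

Under that assumption, 376 × 480 × 2 and 752 × 480 both come to 360,960 bytes per frame, so no data is lost; it is only misdescribed.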

Hooking up the camera and VR headset showed that the LEDs strobed at a frequency similar, but not identical, to the tracking camera’s frame rate. This produced an irregular beat pattern that was most likely unintentional. In other words, synchronization wasn’t enabled by default and had to be set up manually.
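The beat effect follows from simple arithmetic: two periodic processes running at slightly different rates drift in and out of phase at the difference of those rates. The short sketch below illustrates this with made-up numbers; the 60 Hz and 60.5 Hz values are hypothetical and are not measurements of the DK2.

```python
def beat_frequency(led_hz, camera_hz):
    """Rate (in Hz) at which the strobe drifts through one full cycle
    relative to the camera's frames."""
    return abs(led_hz - camera_hz)


def beat_period_frames(led_hz, camera_hz):
    """Number of camera frames per full drift cycle, or None if the rates match."""
    beat = beat_frequency(led_hz, camera_hz)
    return None if beat == 0 else camera_hz / beat


# Hypothetical rates, chosen only to illustrate the effect.
beat = beat_frequency(60.5, 60.0)
frames = beat_period_frames(60.5, 60.0)
```

With these example rates, the LED brightness seen by the camera would cycle once every two seconds, or every 120 frames. When the shutter is locked to the strobe, the difference is zero and the beat disappears.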

This is where pH5’s knowledge came in. By writing to the registers of the camera’s imaging sensor, the shutter could be opened at the exact moment a synchronization pulse was received over the connecting cable. Sending commands directly to the camera also gave full control over exposure, gain, black level, and image flipping.

Lens distortion was another issue that needed to be corrected. Because of the camera’s relatively large field of view, the device suffered from a few calibration problems. This was addressed by removing the IR filter and working out the intrinsic parameters of the lens. Once the distortion formula had been calculated, the camera’s captured image was corrected accordingly, producing a better view.
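The article does not say which distortion formula was used, so the sketch below swaps in a common two-coefficient radial model as a stand-in: a point at distance r from the image center is scaled by 1 + k1·r² + k2·r⁴. Coordinates are assumed to be normalized (already shifted by the principal point and divided by the focal length), and the coefficient values are hypothetical, not the DK2 camera's calibration.

```python
def distort(xu, yu, k1, k2):
    """Apply radial distortion to an undistorted, normalized image point."""
    r2 = xu * xu + yu * yu
    scale = 1 + k1 * r2 + k2 * r2 * r2
    return xu * scale, yu * scale


def undistort(xd, yd, k1, k2, iterations=20):
    """Invert the radial model by fixed-point iteration, starting from the
    distorted point as the initial guess."""
    xu, yu = xd, yd
    for _ in range(iterations):
        r2 = xu * xu + yu * yu
        scale = 1 + k1 * r2 + k2 * r2 * r2
        xu, yu = xd / scale, yd / scale
    return xu, yu


# Hypothetical coefficients, for illustration only.
K1, K2 = -0.2, 0.05
xd, yd = distort(0.3, 0.2, K1, K2)
xu, yu = undistort(xd, yd, K1, K2)
```

The model has no closed-form inverse, which is why the correction step iterates. For mild distortion like the example values, the iteration settles within a handful of passes.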

Extracting the LED Positions Opens Up a Whole New World of Input Possibilities

Unlocking the camera tracking capabilities could open the door for tracking other input devices. Custom wearable accessories with embedded infrared lights could be picked up by the system. Modifying ‘raver gloves’ for VR use, for example, could provide additional inputs for the virtual reality experience and induce greater levels of presence.

See Also: The Incredible Performance of the Oculus Rift DK2’s Positional Tracking (video)

There is Still More to Understand

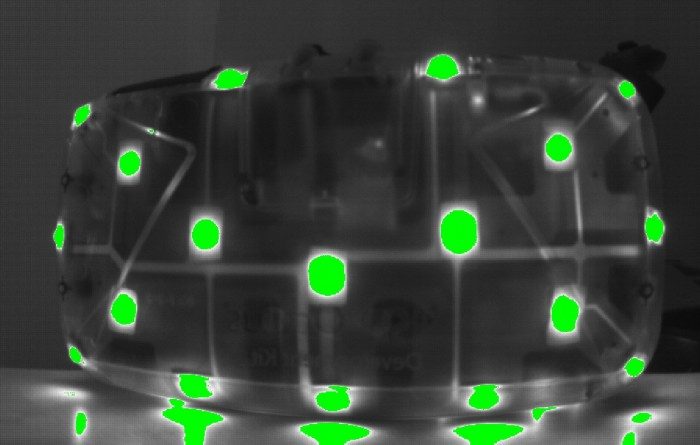

Thanks to the collective work of the VR community online, many of the mechanisms behind the Oculus Rift DK2’s positional tracking have been uncovered. The LEDs can be turned on and tracked with the DK2’s camera; signals and commands can be sent directly to both devices, allowing full control over the system; and a pose algorithm has been developed that traces IR blobs in image space over time.

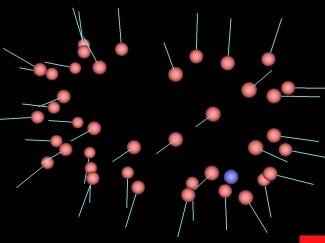

However, there is still much more to learn. For example, when extracting the 3D positions of the LEDs in the headset, it turns out that the lights are not arranged symmetrically. It has been proposed that this is a calibration issue that surfaces during the manufacturing process. Additional testing of other headsets will show whether each DK2 is truly factory-calibrated, or if all the devices contain the exact same position data. The 3D pose algorithm, although initially prototyped, will need to be fine-tuned as well.

A video by Brad Davis shows initial LED identification after passing the camera input through an image processor to eliminate everything from the frame except for the LEDs.
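The article does not describe the image processing used in the video. As a rough illustration of how LEDs might be isolated from a frame, the sketch below uses a plain brightness threshold followed by a flood fill that groups neighboring bright pixels into blobs and reports each blob's centroid. The threshold value and the function name are assumptions made for this example.

```python
def find_blobs(image, threshold=128):
    """Return (x, y) centroids of 4-connected regions brighter than threshold.

    `image` is a list of rows, each a list of grayscale pixel values.
    """
    height = len(image)
    width = len(image[0]) if height else 0
    seen = [[False] * width for _ in range(height)]
    blobs = []
    for y in range(height):
        for x in range(width):
            if seen[y][x] or image[y][x] <= threshold:
                continue
            # Flood-fill one bright region, collecting its pixels.
            stack = [(x, y)]
            seen[y][x] = True
            pixels = []
            while stack:
                px, py = stack.pop()
                pixels.append((px, py))
                for nx, ny in ((px + 1, py), (px - 1, py), (px, py + 1), (px, py - 1)):
                    if (0 <= nx < width and 0 <= ny < height
                            and not seen[ny][nx] and image[ny][nx] > threshold):
                        seen[ny][nx] = True
                        stack.append((nx, ny))
            cx = sum(p[0] for p in pixels) / len(pixels)
            cy = sum(p[1] for p in pixels) / len(pixels)
            blobs.append((cx, cy))
    return blobs


# Tiny synthetic frame: one 2x2 bright spot and one single bright pixel.
frame = [
    [0, 0, 0, 0, 0, 0],
    [0, 255, 255, 0, 0, 0],
    [0, 255, 255, 0, 0, 0],
    [0, 0, 0, 0, 0, 200],
]
centroids = find_blobs(frame)
```

Tracking these centroids from frame to frame, and reading their brightness over time, is what would feed an LED identification step.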

Overall, though, the developers were able to come together to help each other understand how the tracking system works. These findings are giant leaps towards better documentation of the Rift, which allows those interested in creating VR experiences to see how everything fits into place. This community project is sure to inspire others to dig deeper and share their findings with the world.