Motion capture is used throughout the VFX industry, but a new project from mocap specialist and developer Jasper Brekelmans aims to add live augmented reality previews of motion capture performances, making it possible to preview dances in realtime, overlaid onto reality.

Visual effects in movies and television now use motion capture, the process of digitally recording a human actor’s movements, as a matter of course. But developer Jasper Brekelmans is experimenting with Microsoft’s augmented reality visor, HoloLens, as a way to let directors and performers see these digital performances overlaid onto reality in realtime.

Last time we checked in with Brekelmans, he was demonstrating his use of the HoloLens to build an AR integration with MotionBuilder, Autodesk’s popular character animation software.

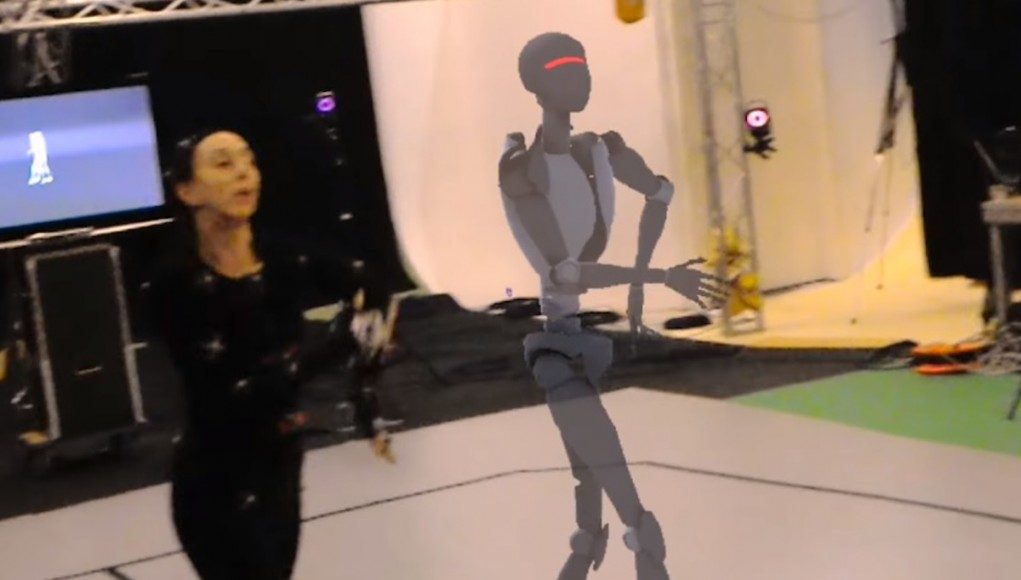

Brekelmans is now working with the WholoDance project, an organisation dedicated to digitally capturing the art of dance for both cultural and research purposes. Teaming up with Motek Entertainment, which provides the motion capture skills and facilities, Brekelmans has built a way to stream the motion capture data wirelessly, as it’s being generated, to a HoloLens visor, which then renders an augmented avatar mimicking the dancer’s movements overlaid onto reality, all tracked in 3D space.

For Brekelmans it was a natural evolution from his prior work. He used the technology for streaming data from Autodesk’s MotionBuilder, running on a desktop computer, to the Microsoft HoloLens, which allowed a life-size 3D character to be visualised in realtime on the motion capture stage.
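To make the idea of streaming pose data from a desktop to a headset more concrete, here is a minimal Python sketch of how one frame of skeleton data might be packed into a compact binary message and sent over a network. Everything in it is an assumption for illustration: the wire format, the function names `pack_frame`, `unpack_frame` and `send_frame`, and the choice of UDP are hypothetical and are not taken from Brekelmans' tools or from MotionBuilder.

```python
import socket
import struct

# Hypothetical wire format (an assumption, not the project's actual protocol):
#   header:    frame number (uint32) + joint count (uint16), little-endian
#   per joint: position x, y, z and rotation quaternion x, y, z, w (float32)
HEADER = struct.Struct("<IH")
JOINT = struct.Struct("<7f")


def pack_frame(frame_number, joints):
    """Serialize one pose. `joints` is a list of 7-float tuples."""
    payload = HEADER.pack(frame_number, len(joints))
    for joint in joints:
        payload += JOINT.pack(*joint)
    return payload


def unpack_frame(payload):
    """Inverse of pack_frame: return (frame_number, list of joint tuples)."""
    frame_number, count = HEADER.unpack_from(payload, 0)
    joints = [
        JOINT.unpack_from(payload, HEADER.size + i * JOINT.size)
        for i in range(count)
    ]
    return frame_number, joints


def send_frame(sock, address, frame_number, joints):
    """Send one packed pose as a single UDP datagram."""
    sock.sendto(pack_frame(frame_number, joints), address)


if __name__ == "__main__":
    # Two illustrative joints: a position plus an identity rotation each.
    pose = [
        (0.0, 1.0, 0.5, 0.0, 0.0, 0.0, 1.0),
        (0.25, 1.5, -0.5, 0.0, 0.0, 0.0, 1.0),
    ]
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    # The address is a placeholder; a real headset would have its own IP.
    send_frame(sock, ("127.0.0.1", 9000), 1, pose)
    sock.close()
```

The sketch uses UDP on the assumption that, for a live preview, a dropped pose matters less than a delayed one, since the next frame replaces it a few milliseconds later. A real system might make a different trade-off.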

“The first thing we noticed when giving the HoloLens to a dancer was that they were instantly mesmerized,” says Brekelmans. “Right after that they immediately started analyzing their own performance, much like professional athletes do with similar analyzing equipment. Nuances of how the hips moved during balancing or how footwork looked for example became much more apparent and clear when walking around a life-size 3D character in motion than watching the same thing on a 2D screen.”

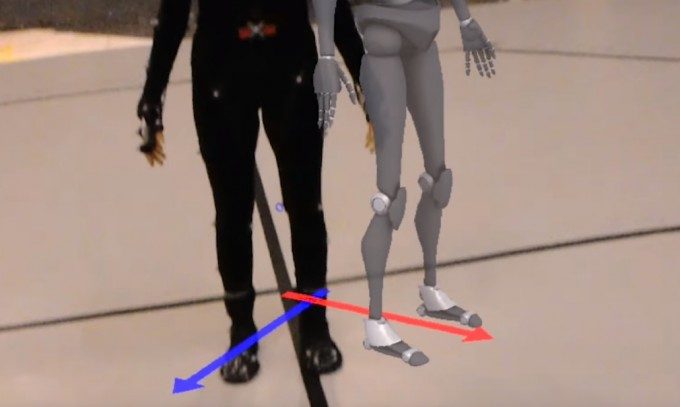

An Xbox controller gave the user various controls, such as offsetting the 3D avatar in the physical space, toggling the different visualizations and tuning visual parameters. Because of the amount of control needed, the team opted for a “known device” instead of relying on the gaze, air tap and voice commands commonly used with the HoloLens.

“Even though the HoloLens is specifically designed for projecting holograms further away from the user, we did experiment with the performer wearing the device while being tracked,” says Brekelmans. “This would allow them to see graphics overlaid on their hands and feet for example. The display technology wasn’t ideal for this purpose (yet) but it did give us things to experiment with to form new ideas for the future.”

So where might this experimental technique lead in the future? “… we would love to play with other devices, including ones that are tethered to a desktop machine utilizing the full power of high-end GPUs. As well as use it in a Visual Effects context, visualizing 3D characters, set extensions and such in the studio or even on location.”