Czech developer iNFINITE Production has released UVRF – a free, cross-platform template for hand presence in VR. The open-source demo offers a framework for use in any Unreal Engine project, as well as a ‘Playground’ scene containing an underground bunker and shooting range to showcase hand interactivity.

As detailed in a post on the Unreal Engine VR developer forum, the UVRF framework aims to be a useful starting point for implementing hand presence in an Unreal-based VR experience. It offers 17 grab animations to cover most objects, per-platform input mapping and logic, basic haptics, teleport locomotion using NavMesh (with rotation support on Rift), touch UI elements, and several other useful features. The framework is released under the CC0 license, which dedicates it to the public domain so that anyone can use it without restriction.

In a message to Road to VR, Jan Horský of iNFINITE Production explained why this template could be particularly useful to new developers. “While Unreal does [a] very good job at making development accessible, building hands that properly animate, are properly positioned, with grabs and throws that feel natural and so on, is still not a trivial task,” he writes. “While it’s not a problem for experienced dev teams, it is a problem for newcomers. And they’re the ones that are likely to have ideas that will surprise us all. This little demo is an attempt to make VR development easier for them.”

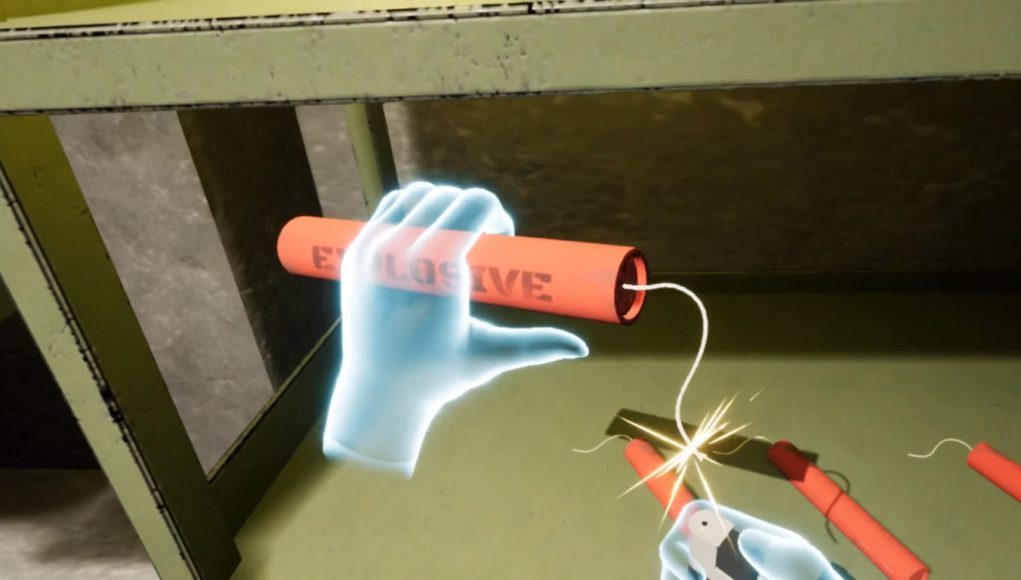

The included ‘Playground’ demo shown in the video features a functional shooting range in an underground bunker. The bunker is littered with magazines to demonstrate the multi-object interaction of reloading a gun, alongside many other features that highlight the hand animations.

UVRF was originally developed as an internal prototyping tool at iNFINITE Production before the team decided to share it with the world. “I expected such a project would come from companies that are more interested in VR growth like Oculus, Valve, or HTC,” says Horský. “It’s nearly a year since Touch was released and there is still no such thing publicly available, so we decided to take it into our own hands.”

You can download the template here.