At CES 2017, Lumus is demonstrating its latest waveguide optics which achieve a 55 degree field of view from optics less than 2mm thick, potentially enabling a truly glasses-sized augmented reality headset.

Israel-based Lumus has been working on transparent optics since 2000. The company has developed a unique form of waveguide technology which allows images to be projected through and from incredibly thin glass. The tech has been sold in various forms for military and other non-consumer applications for years.

But, riding the wave of interest in consumer adoption of virtual and augmented reality, Lumus recently announced $45 million in Series C venture capital to propel the company’s technology into the consumer landscape.

“Lumus is determined to deliver on the promise of the consumer AR market by offering a range of optical displays to several key segments,” Lumus CEO Ben Weinberger says.

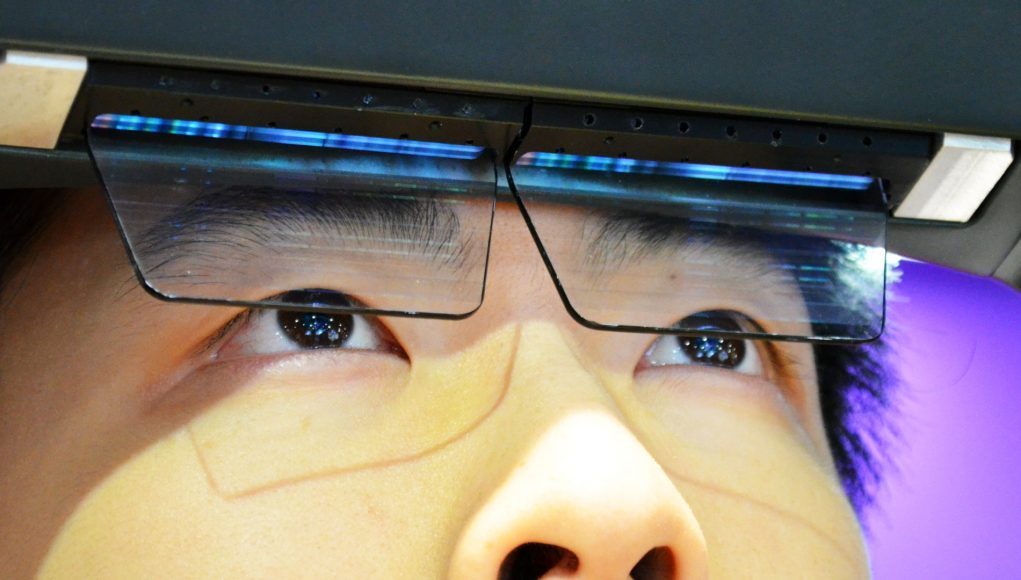

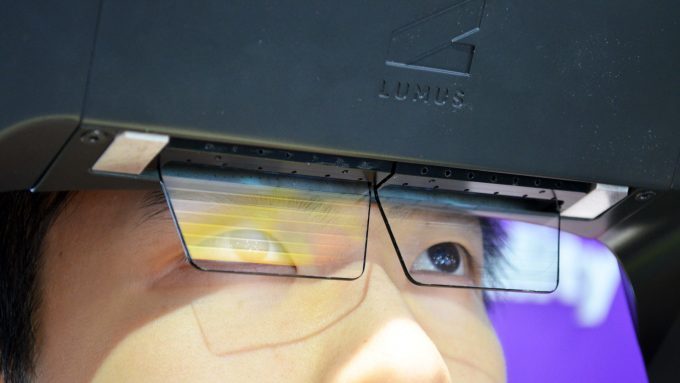

This week at CES 2017, Lumus was showing off what they’re calling the Maximus, a new optical engine from the company with an impressive 55 degree field of view. For those of us used to the world of 90+ degree VR headsets, 55 degrees may sound small, but it’s actually been difficult to achieve that level of field of view in a highly compact optical system. Meta has a class-leading 90 degree field of view, but requires sizeable optics. Lumus’ 55 degree field of view comes from a sliver of glass less than 2mm thick. Crucially, you can also get your eyes very close to the Maximus optics, potentially enabling truly glasses-sized augmented reality headsets.

Looking Through the Lens

Unlike some of the company’s other optical engines, which were shown integrated into development kit products, the Maximus was mounted in place and offered no chance to see any sort of tracking (though Lumus primarily deals in the optical engine, not entire AR headsets).

Stepping up to the rig and looking inside, I saw an animated dragon flying through the air above the convention floor. The view was very sharp and, for an AR headset, felt like it had some immersive potential. However, the contrast didn’t seem great, with bright white areas appearing blown out. The image also had a silvery holographic quality to it. This may mean a lack of dynamic range, or that the display was not adjusting for ambient light in this demonstration. Brightness seems to be among the Maximus optical engine’s strong qualities: even without any dimming lenses to cut back on ambient light, the image was bright and clear.

Ultimately I was very impressed by the capabilities of the Maximus optical engine. Assuming there are no major flaws in the display system, this waveguide technology seems like it could be a foundation for extremely compact AR glasses, similar in size to regular spectacles (something the AR industry has been attempting to achieve for some time now).

The image I saw in the Maximus was 1080p, quite sharp at the 55 degree field of view, though Dr. Eli Glikman said that the resolution is limited only by the microdisplay that feeds the image to the optics. With a higher resolution microdisplay (such as Kopin’s new 2k x 2k model perhaps), there’s great opportunity to scale image fidelity here.
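To get a rough feel for what a higher resolution microdisplay would buy at the same field of view, here is a back-of-the-envelope sketch. It rests on assumptions Lumus did not confirm: that "1080p" means 1920 x 1080, that "2k x 2k" means 2048 x 2048, that the 55 degree figure is measured diagonally, and that pixels are spread evenly across the view. The function name is ours, purely for illustration.

```python
import math


def average_pixels_per_degree(width_px: int, height_px: int,
                              diagonal_fov_deg: float) -> float:
    """Crude average angular pixel density along the image diagonal.

    Assumes the stated field of view is diagonal and that pixels are
    spread evenly across it (a simplification, not a measured figure).
    """
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_fov_deg


# Assumed panel sizes: 1920 x 1080 for "1080p", 2048 x 2048 for "2k x 2k".
for label, (w, h) in {"1080p": (1920, 1080), "2k x 2k": (2048, 2048)}.items():
    ppd = average_pixels_per_degree(w, h, 55.0)
    print(f"{label}: roughly {ppd:.0f} pixels per degree")
```

Under those assumptions, the same optics fed by the larger panel would pack noticeably more pixels into each degree, which is the kind of scaling Glikman was pointing to.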

Glikman said that the Lumus Maximus still has about a year of R&D left before it’s ready to be productized, but that partner companies will introduce product prototypes based on the Maximus this year.

Sleek Prototype

To prove that the company’s optical engines are capable of enabling glasses-sized AR headsets, Lumus was also showing a prototype headset they called ‘Sleek’. It uses some of the company’s other optical engines and has a smaller field of view, but it’s made to show the impressively small form factor that these optics make possible.

How it Works

It’s actually a pretty awesome feat of physics to channel light down a slim piece of glass and then get it to pop out of that glass when and where you need it.

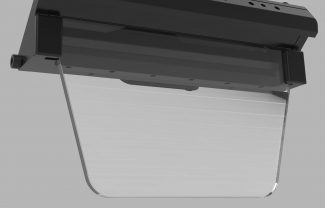

The Maximus optical engine, as seen at CES 2017, relied on the bulky electronics above the optics. Housed there are a pair of microdisplays, which function as the light source of the optics. The image from each display is stretched and compressed to be emitted along the top of the lenses. From here it cascades down the optics and, from our understanding of Lumus’ proprietary technology, uses an array of prism-like structures in the glass to bounce certain sections of the injected light out toward the user’s eye. During that process, the image is reconstructed into the one originating on the microdisplay (somewhat like the process of pre-warping visuals to cancel out the warping of a headset’s lenses).
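For a sense of the physics involved in keeping light inside such a thin slab, the sketch below works through the textbook case of total internal reflection, the general principle waveguides rely on to trap light. It is not a model of Lumus’ proprietary design: the refractive index of 1.5 (typical of ordinary glass), the 50 degree ray angle, and the 30mm travel distance are illustrative assumptions, and the function names are ours. Only the 2mm thickness comes from Lumus’ stated figure.

```python
import math


def critical_angle_deg(n_glass: float, n_outside: float = 1.0) -> float:
    """Smallest angle from the surface normal at which light stays trapped.

    Follows from Snell's law: sin(theta_c) = n_outside / n_glass.
    """
    if n_glass <= n_outside:
        raise ValueError("total internal reflection needs n_glass > n_outside")
    return math.degrees(math.asin(n_outside / n_glass))


def advance_per_bounce_mm(thickness_mm: float, angle_deg: float) -> float:
    """Distance a ray travels along the slab between successive reflections."""
    return thickness_mm * math.tan(math.radians(angle_deg))


# Assumed values for illustration only (not Lumus specifications),
# apart from the 2mm thickness quoted for the Maximus optics.
N_GLASS = 1.5          # assumed refractive index of the glass
THICKNESS_MM = 2.0     # "less than 2mm thick"
RAY_ANGLE_DEG = 50.0   # assumed ray angle, steeper than the critical angle
TRAVEL_MM = 30.0       # assumed distance the light must cover in the lens

theta_c = critical_angle_deg(N_GLASS)
step = advance_per_bounce_mm(THICKNESS_MM, RAY_ANGLE_DEG)
print(f"critical angle: {theta_c:.1f} degrees from the normal")
print(f"advance per bounce: {step:.2f} mm")
print(f"bounces over {TRAVEL_MM:.0f} mm: about {TRAVEL_MM / step:.0f}")
```

Any ray striking the glass surface at more than the critical angle reflects back inside rather than escaping, so it zigzags down the slab until something, such as the prism-like structures described above, redirects it out toward the eye.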

With Lumus’ advances in waveguide optics, coupled with other impressive microdisplay advances seen at CES this year, it seems that practical everyday solutions for lightweight augmented reality hardware are rapidly approaching. CES 2018 may prove to be a fascinating milestone for augmented reality.