During the opening keynote of their annual Unite conference yesterday, Unity announced that their in-development VR authoring tool EditorVR, which allows creators to step inside and work on their projects using virtual reality, is on its way within the next couple of months. Here’s a video of the full demonstration, which showed off the latest version.

At this year’s annual Unite conference, Unity once again were out to make it clear that they and their hugely popular 3D game engine were ‘all about VR’. To that end, they used a section of the conference’s opening keynote to give us the latest look at the engine’s virtual reality-enabled editor, which allows creators to dive into their projects and build their games and apps immersively from within VR.

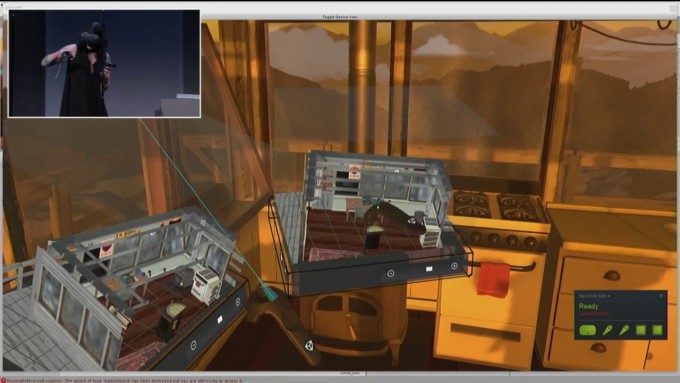

The VR authoring tool, which the company refers to as ‘EditorVR’ (or EVR for short), was originally shown at last year’s Unite conference using the Oculus Rift headset and Oculus Touch motion controllers, and also made a guest appearance at GDC earlier this year. At Unite 2016, Principal Designer Timoni West, accompanied by Principal Engineer Amir Ebrahimi, once again demonstrated Unity’s progress on EVR live on stage, this time using the HTC Vive.

West used a Unity project and assets from Campo Santo’s hit indie game Firewatch (no, there’s no VR version to announce as yet I’m afraid) to demonstrate how any project could be worked on from within VR. In fact, West made the point that non-VR projects could benefit from the immersive editing experience, as it gives editors a feel for the scale of the worlds they’re building in real time.

There were many enhancements shown off in the demonstration and, using the original Unite demonstration video as a comparison, the team have clearly focused hard on streamlining the user interface, all the while stressing that everything you can do in the standard Unity editor can be achieved in VR too. User interface panels looked much slicker than in EVR’s initial showing, and some impressive actions were on display, such as physically dragging models between different, fully rendered virtual scenes in real time.

Of course, it’s still difficult to gauge whether the benefits and obvious wow factor of working in VR will result in a more effective workflow overall. I suspect long-time Unity veterans will take some persuading to toss aside actions within the traditional UI, which will likely be committed to muscle memory at this point. Nevertheless, it remains an important commitment to VR as a platform and a glimpse at ways in which immersive technology may affect the way we work in the future.