ARCore, Google’s developer platform for building augmented reality experiences, is getting an update today that aims to make shared AR experiences quicker and more reliable. Google is also rolling out iOS support for Augmented Faces, the company’s 3D face filter API.

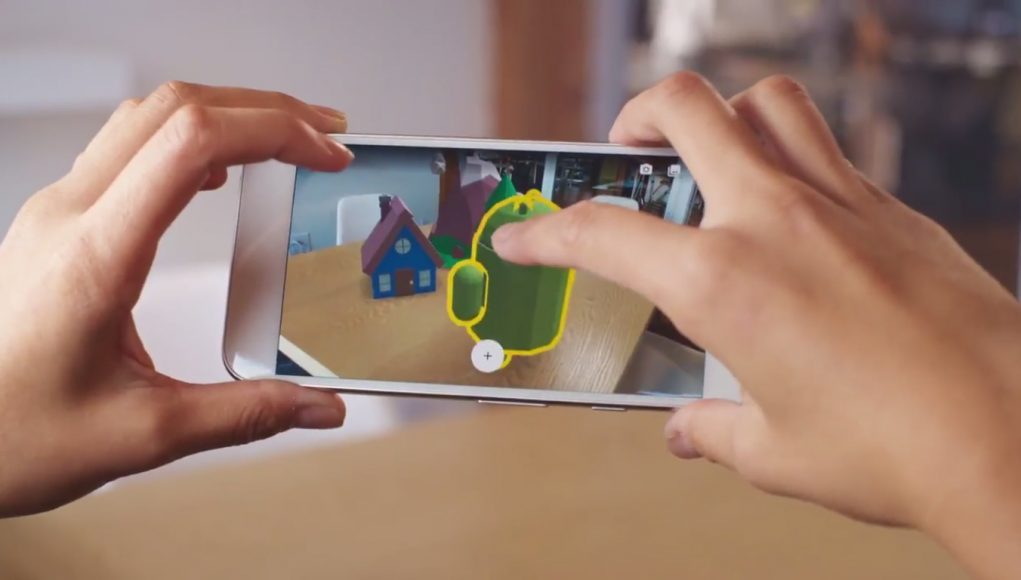

Introduced last year, Google’s Cloud Anchors API essentially lets developers create a shared, cross-platform AR experience for Android and iOS, and then host the so-called anchors through Google’s Cloud services. Users can then add virtual objects to a scene and share them with others, so that everyone can view and interact with them simultaneously.

In today’s update, Google says it’s made improvements to the Cloud Anchors API that make hosting and resolving anchors more efficient and robust, something the company says is due to improved anchor creation and visual processing in the cloud.

Google AR team product manager Christina Tong says in a blog post that developers will now have access to more angles across larger areas in the scene, making for what she calls a “more robust 3D feature map.”

This, Tong explains, will allow for multiple anchors in the scene to be resolved simultaneously, which she says reduces the app’s startup time.

Tong says that once a map is created from your physical surroundings, the visual data used to create the map is deleted, leaving only anchor IDs to be shared with other devices.

In the future, Google is also looking to further develop Persistent Cloud Anchors, which would allow users to map and anchor content over both a larger area and an extended period of time, something Tong calls a “save button” for AR.

This prospective ‘AR save button’ would, according to Tong, be an important method of bridging the digital and physical worlds, as users may one day be able to leave anchors anywhere they need to, attaching things like notes, video links, and 3D objects.

Apps like Mark AR, a graffiti-art app developed by Sybo and iDreamSky, already use Persistent Cloud Anchors to link user-made creations to real-world locations.

If you’re a developer, check out Google’s guide to creating Cloud Anchor-enabled apps.