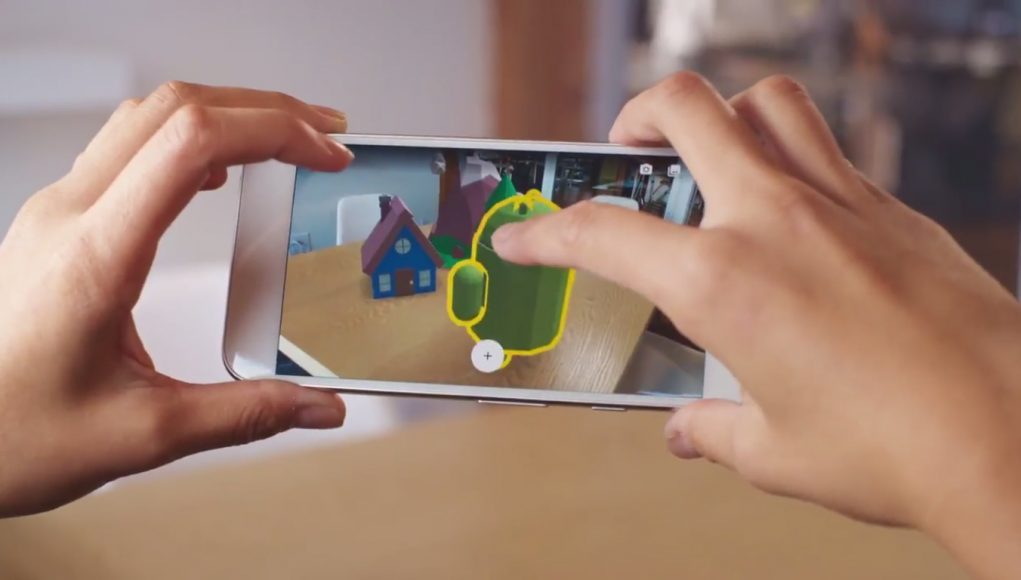

Ahead of Mobile World Congress (MWC) next week, Google announced it’s bringing ARCore, its augmented reality SDK, out of preview and to a number of flagship smartphones in its 1.0 version. Google Lens, the company’s tool to help users identify real-world objects, is also making its way from Pixel to more flagship devices soon – albeit still in preview.

Anuj Gosalia, Director of Engineering on the company’s AR team, says ARCore 1.0 will launch alongside the ability for developers to publish AR apps to the Play Store. Gosalia maintains ARCore “works on 100 million Android smartphones, and advanced AR capabilities are available on all of these devices.”

Here are the 13 models ARCore supports now:

- Google’s Pixel, Pixel XL, Pixel 2 and Pixel 2 XL

- Samsung Galaxy S8, S8+, Note8, S7 and S7 edge

- LG’s V30 and V30+ (Android O only)

- ASUS’s Zenfone AR

- OnePlus’s OnePlus 5

Google says it’s partnering with manufacturers to bring ARCore to other devices moving forward, including upcoming phones from Samsung, Huawei, LGE, Motorola, ASUS, Xiaomi, HMD/Nokia, ZTE, Sony Mobile, and Vivo.

Once Google throws the switch, you’ll be able to download ARCore here.

ARCore 1.0 also comes with a few new features Google says will add “additional improvements and support to make [the] AR development process faster and easier.” ARCore 1.0 is said to feature improved environmental understanding that lets you place virtual assets on irregularly shaped surfaces such as couches, tree branches, and pillows.

Google showcased a few AR experiences coming soon, including one from Snapchat showing off an immersive portal to FC Barcelona’s Camp Nou stadium, room visualizations from Sotheby’s International Realty, Porsche’s Mission E Concept vehicle, OTTO AR’s furniture app, and Ghostbusters World, which you can see in action below.

Snapchat’s FC Barcelona AR experience will launch in mid-March to all Snapchat users globally through Snapcode.

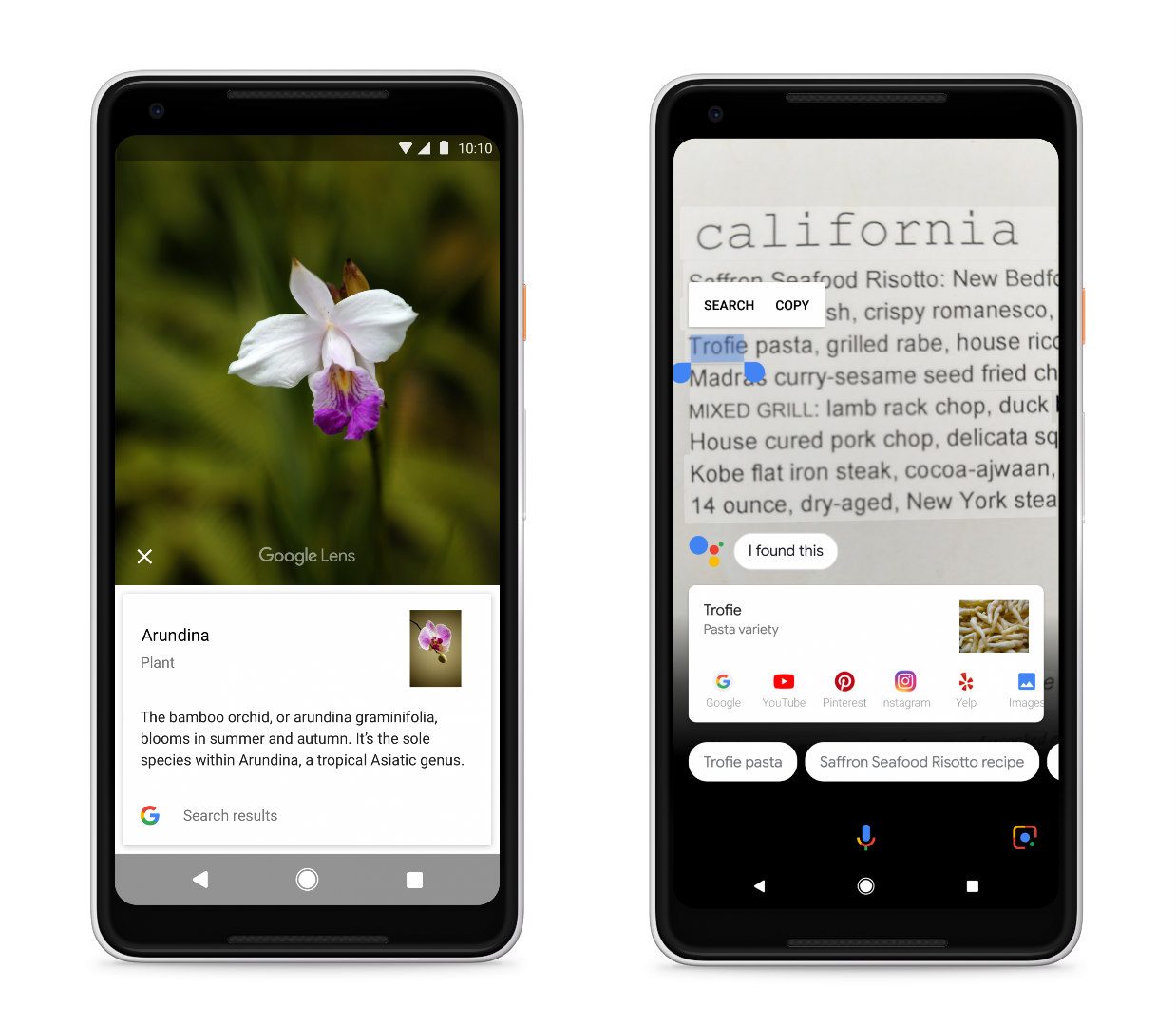

Google Lens, which can be used for image detection such as saving a phone number or address to a contact from a business card, or getting details about a physical book, landmark or painting in a museum, is also making its way to many more devices following its announcement late last year.

Google Photos’ internal Lens function is coming to all English-language users with the latest version of the app on Android and iOS 9 and newer.

The Lens function in Google Assistant, which was previously only available on Pixel devices, will be arriving for English-language users on supported devices “over the coming weeks.” Flagship devices from Samsung, Huawei, LG, Motorola, Sony, and HMD/Nokia will get the camera-based Lens experience within Google Assistant. The company plans to bring support to more devices in the future.

Since launch, Google Lens has seen updates adding text selection features and the ability to create contacts and events from a photo. In the coming weeks, Google plans to improve Lens’ ability to recognize common animals and plants, like different dog breeds and flowers.