HoloLens development kits started shipping last week following Microsoft’s Build conference and there were also a number of NDAs that were lifted, which allowed some companies to start talking about their HoloLens projects for the first time. One of those companies was Portland’s Object Theory, which was founded in July 2015 after HoloLens engineer Michael Hoffman left Microsoft Studios to co-found Object Theory with serial entrepreneur Raven Zachary, who sold his previous iPhone app development company to Walmart Labs.

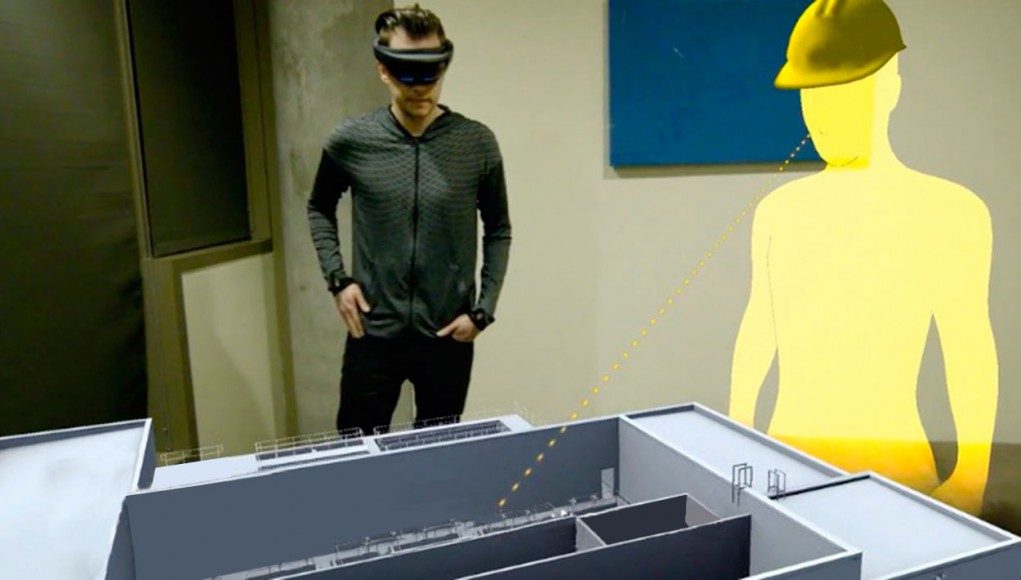

I had a chance to do 90 minutes’ worth of HoloLens demos on Friday, and then talk with Raven about Object Theory’s mixed reality collaboration service and early HoloLens client work with CDM Smith. We talked about AR vs VR, designing around the HoloLens’ relatively small field of view, and why he decided to focus exclusively on developing enterprise applications for the HoloLens.

LISTEN TO THE VOICES OF VR PODCAST

One of the biggest complaints that people have about the HoloLens is the relatively small field of view. Raven claims that after extensive use of the HoloLens, the brain eventually adapts to this small field of view and forgets about it. This may be true if developers design around the field of view limitations, but I found myself frustrated with the limited field of view after going through about 90 minutes’ worth of the latest HoloLens demos that shipped last week. I had to continually pan my head around a scene in order to see everything, or constantly take a step backwards and reposition myself so that I wasn’t too close to an augmented character or object of interest. It not only breaks immersion, but it can also be physically fatiguing.

Raven said that early AR developers will have to design around these limitations until the field of view improves. Object Theory has learned that using a miniaturized tabletop scale or placing objects at an adequate distance can help minimize the limitations of the field of view, and they claim that most of the complaints are coming from the tech press or experienced VR developers while most new immersive tech users don’t seem to be too bothered by it.

It’s also inevitable that the field of view will improve over time, so it’s just one of the initial limitations of the first iteration of a completely untethered mobile computing platform. The HoloLens’ ability to both do positional tracking and keep track of your relative position within a room is actually extremely impressive, especially when you start to look at its world-locking features, which allow you to walk completely around holograms that you place in the room. Being able to orient yourself around virtual objects within your physical surroundings is compelling, and it will have countless applications once the computer vision technology gets to the point of being able to identify and augment specific objects. Raven says that he expects the HoloLens to be the primary user interface to all of your Internet-of-Things connected devices.

The HoloLens is also able to detect and keep track of multiple rooms, which starts to open up features and functionality that go beyond Vive’s room-scale capabilities. You don’t have to worry about completely clearing out your space; instead, you can do a high-resolution scan of all of the furniture and obstacles, and the content will adapt to your environment. It was liberating to be able to roam around untethered in an entire room beyond the Chaperone constraints of the Vive, and still have a social connection to other people within the room.

While most of the initial demos were focused on scanning a single room, the HoloLens does have the capability to recognize what room you’re in, meaning that at some point you’ll be able to walk through your entire house and interact with augmented reality stories and games.

The most impressive demo that I saw was Fragments, which is one of the first mixed reality experiences shipping with the HoloLens development kits. It’s a story-driven mystery game where clues from recovered memories are displayed overlaid on your room after you do a high-resolution scan. I found myself immersed in finding the hidden objects, and then applying a number of different filters which helped me isolate the victim’s location across a number of different cities. The mixed reality experience was by itself novel and interesting, and the game component of having a story that you were trying to piece together helped maintain my interest longer than the other tech demos initially available.

The positional tracking and head tracking were low enough latency that there were a number of times when the mixed reality content did trick my subconscious brain into believing it was real. But the vast majority of the time, my rational brain knew that I was in an augmented reality experience. I imagine that it’ll be a lot more difficult for AR experiences to cultivate the same level of presence as VR because the latency and fidelity requirements are so much higher, but I don’t think presence is necessarily the primary goal or motivation with AR. It’s more about adding a digital layer of value to your existing world rather than trying to completely transport you to another world.

For anyone who appreciates the level of immersion that virtual reality can provide, the HoloLens is going to be pretty disappointing. But AR has quite a number of unique affordances that transcend the capabilities of VR, and these make the HoloLens a compelling platform.

Many analysts agree there will be many more market opportunities for augmented reality than virtual reality. There are many use cases where AR makes a lot more sense than VR. And while most of the innovation with virtual reality is coming from entrepreneurial start-ups and gaming, Raven argues that most of the innovation in AR will likely come from established Fortune 500 companies because there are a lot more immediately obvious business applications for AR than VR right now.

The HoloLens dev kits currently support two gestures: a finger click to select, and the exit “bloom” motion of holding your palm upward and spreading your fingers apart. I found that the finger-clicking gesture was really fatiguing after doing 90 minutes of demos, and I’m glad to see that the HoloLens development kit is shipping with a remote clicker button. While a lot of people dream about Minority Report style gestures as their primary user interface, the reality is that these motions can be quite fatiguing beyond doing them in short bursts.

There were a number of HoloLens demos where the primary method of manipulation was using the movements of your head to move objects around. While this may work in a short tech demo, I found it very fatiguing and frustrating because of how imprecise my head is for moving objects around. I would’ve been much more efficient doing the same 3DUI tasks with a tracked six-degree-of-freedom controller with buttons held in my hands.

Raven did say that you will be able to connect 6-DOF controllers via Bluetooth to the HoloLens, and I expect that in the long run most professional applications will start to use controllers with physical buttons rather than rely upon gestures. The voice interface can be quite nice, but I could also see how it might be difficult to use in shared office environments.

Finally, Raven says that there’s a lot of prototyping and development that you can start to do even if you don’t want to spend $3000 on a HoloLens development kit. Microsoft just announced the HoloLens emulator, which allows you to build a Unity project and start to preview your mixed reality application. There are also a lot of new documentation and videos that were just released after last week’s Build conference.

If you’re in the Portland, OR area, be sure to check out the monthly HoloLens Meetup that Raven founded, and you can learn more about Object Theory’s latest news by following their blog.

Here’s an overview of Object Theory’s presentation on their Mixed Reality Collaboration Service:

Theme music: “Fatality” by Tigoolio