There’s an intuitive appeal to using controller-free hand-tracking input like Leap Motion’s: there’s nothing quite like seeing your virtual hands and fingers move just like your own, without needing to pick up a controller and learn how to use it. But reaching out to touch and interact in this way can be jarring, because there’s no physical feedback from the virtual world. When your expectation of feedback isn’t met, it can be unclear how best to interact with this new non-physical world. In a series of experiments, Leap Motion has been exploring how visual design can make controller-free hand input more intuitive and immersive. Leap Motion’s Barrett Fox and Martin Schubert explain:

Guest Article by Barrett Fox & Martin Schubert

Barrett is the Lead VR Interactive Engineer for Leap Motion. Through a mix of prototyping, tools, and workflow building with a user-driven feedback loop, Barrett has been pushing, prodding, lunging, and poking at the boundaries of computer interaction.

Martin is Lead Virtual Reality Designer and Evangelist for Leap Motion. He has created multiple experiences such as Weightless, Geometric, and Mirrors, and is currently exploring how to make the virtual feel more tangible.

Barrett and Martin are part of the elite Leap Motion team presenting substantive work in VR/AR UX in innovative and engaging ways.

Exploring the Hand-Object Boundary in VR

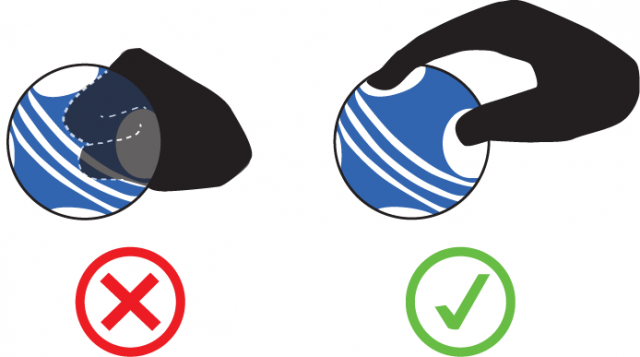

When you reach out and grab a virtual object or surface, there’s nothing stopping your physical hand in the real world. This is usually handled by letting the virtual hand penetrate the geometry of the object or surface, which results in visual clipping. To make physical interactions in VR feel compelling and natural, we have to play with some fundamental assumptions about how digital objects should behave. So how can we take these interactions to the next level?

In interaction sprints at Leap Motion, our team identifies areas of interaction that developers and users often encounter and sets specific design challenges around them. After prototyping possible solutions, we share our results to help developers tackle similar challenges in their own projects.

For our latest sprint, we asked ourselves: could the penetration of virtual surfaces feel more coherent and create a greater sense of presence? To answer this question, we experimented with three approaches to the hand-object boundary.

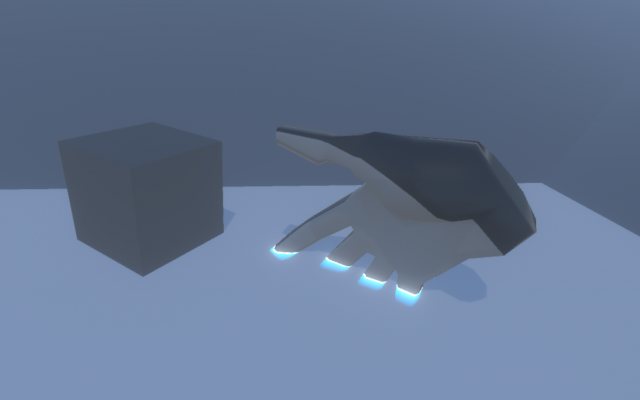

Experiment #1: Intersection and Depth Highlights for Any Mesh Penetration

For our first experiment, we proposed that when a hand intersects another mesh, the intersection should be visually acknowledged. A shallow portion of the occluded hand should remain visible, but change color and fade to transparency.

This execution felt really good across the board. With the glow strength and depth turned down to a minimum, it seemed like an effect that could be applied universally across an application without being overpowering.
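To make the effect concrete, here is a rough Python model of the per-fragment math. This is not Leap Motion’s shader. It assumes the renderer knows how far (`depth`, in metres) a hand fragment lies behind the mesh it intersects. The two knobs mentioned above are treated as hypothetical `glow_depth` and `glow_strength` parameters, with made-up defaults. In an engine, this logic would live in a fragment shader rather than in Python.

```python
from dataclasses import dataclass

# Illustrative sketch only: names and default values are hypothetical.


@dataclass(frozen=True)
class RGBA:
    r: float
    g: float
    b: float
    a: float


def _lerp(a: float, b: float, t: float) -> float:
    return a + (b - a) * t


def intersection_highlight(depth: float, base: RGBA, glow: RGBA,
                           glow_depth: float = 0.01,
                           glow_strength: float = 0.3) -> RGBA:
    """Colour of a hand fragment lying `depth` metres behind another mesh.

    depth <= 0              -> not occluded: draw the hand normally.
    0 < depth < glow_depth  -> tint toward `glow` and fade out with depth.
    depth >= glow_depth     -> fully transparent (hidden, as with plain clipping).
    """
    if depth <= 0.0:
        return base
    # 1.0 right at the surface, falling linearly to 0.0 at glow_depth.
    fade = 1.0 - min(depth / glow_depth, 1.0)
    return RGBA(
        _lerp(base.r, glow.r, glow_strength),
        _lerp(base.g, glow.g, glow_strength),
        _lerp(base.b, glow.b, glow_strength),
        base.a * fade,
    )
```

Turning `glow_depth` and `glow_strength` down toward zero shrinks the visible band and weakens the tint. That corresponds to the subtle, apply-everywhere setting described above.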

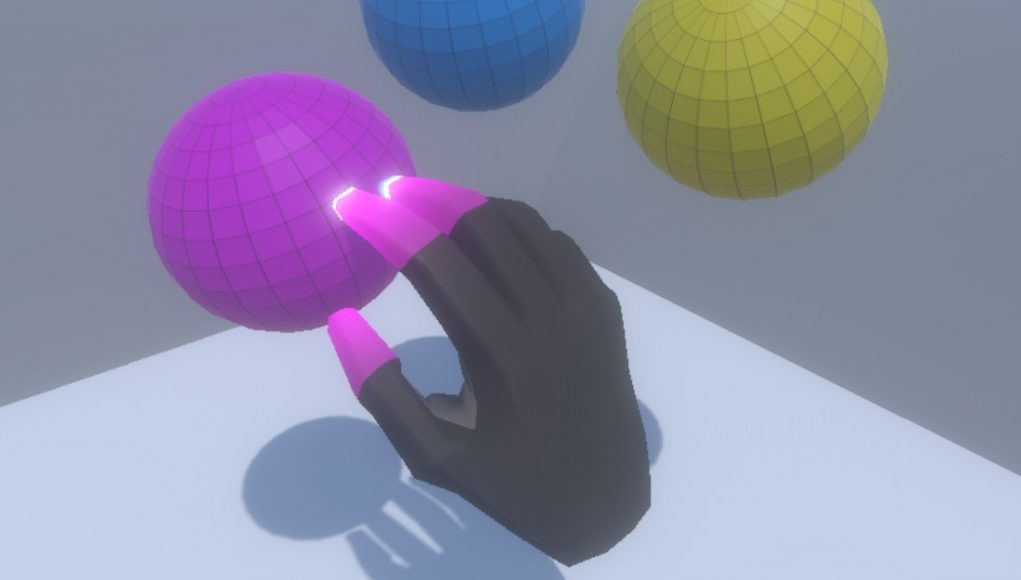

Experiment #2: Fingertip Gradients for Proximity to Interactive Objects and UI Elements

For our second experiment, we made the fingertips change color to match an interactive object’s surface as they get closer to touching it. This might make it easier to judge the distance between fingertip and surface, making us less likely to overshoot and penetrate the surface. Further, if we do penetrate the mesh, the intersection clipping will appear less abrupt, since the fingertip and surface will be the same color.
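A minimal Python sketch of the proximity gradient follows. It assumes interactive objects can be approximated as spheres; `InteractiveSphere` is a hypothetical type. The blend starts at an arbitrary `fade_distance` of 5 cm. The sketch picks the nearest object before blending, so the tint also indicates which object the finger is about to touch.

```python
import math
from typing import NamedTuple, Sequence, Tuple

# Illustrative sketch only: types, names, and defaults are hypothetical.
Vec3 = Tuple[float, float, float]
Color = Tuple[float, float, float]


class InteractiveSphere(NamedTuple):
    """Stand-in for an interactive object: a sphere with a surface colour."""
    center: Vec3
    radius: float
    color: Color


def surface_distance(tip: Vec3, obj: InteractiveSphere) -> float:
    """Signed distance from the fingertip to the sphere surface (negative = inside)."""
    return math.dist(tip, obj.center) - obj.radius


def fingertip_color(tip: Vec3, finger_color: Color,
                    objects: Sequence[InteractiveSphere],
                    fade_distance: float = 0.05) -> Color:
    """Blend the fingertip toward the colour of the nearest interactive object.

    The blend factor is 0 at `fade_distance` or farther and 1 at contact, so a
    tip that clips into the mesh already matches the surface colour.
    """
    if not objects:
        return finger_color
    nearest = min(objects, key=lambda o: surface_distance(tip, o))
    d = surface_distance(tip, nearest)
    t = 1.0 - min(max(d / fade_distance, 0.0), 1.0)
    return tuple(f + (s - f) * t for f, s in zip(finger_color, nearest.color))
```

The blend is linear here for simplicity. An eased curve would work just as well and is purely a tuning choice.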

This experiment definitely helped us judge the distance between our fingertips and interactive surfaces more accurately. In addition, it made it easier to know which object we were closest to touching. Combining this with the effects from Experiment #1 made the interactive stages (approach, contact, and grasp vs. intersect) even clearer.

Experiment #3: Reactive Affordances for Unpredictable Grabs

How do you grab a virtual object? You might make a fist, pinch it, or clasp it. Previously we’ve experimented with affordances, like handles or hand grips, hoping these would guide users in how to grasp objects.

In Weightless: Training Room, the projectiles have indentations that afford more visually coherent grasping. This also makes it easier for users to reliably release the objects in a throw.

While this helped many people rediscover how to use their hands in VR, some users still ignore these affordances and clip their fingers through the mesh. So we thought: what if, instead of modeling static affordances, we created reactive affordances that appear dynamically wherever and however the user chooses to grip an object?

Three raycasts per finger (and two for the thumb) that check for hits on the sphere.
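The article doesn’t say where each ray starts or which way it points. One plausible reading casts one ray along each finger segment, from joint to joint. That gives three rays for a four-joint finger and two for a three-joint thumb. The Python below sketches this under that assumption. The ray-sphere test is the standard quadratic solution; `finger_hits` and its joint layout are hypothetical.

```python
import math
from typing import List, Optional, Sequence, Tuple

# Illustrative sketch only: the per-segment ray layout is an assumption.
Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def raycast_sphere(origin: Vec3, direction: Vec3, max_length: float,
                   center: Vec3, radius: float) -> Optional[float]:
    """Distance along a (non-zero) ray to the sphere surface, or None on a miss.

    A ray that starts on or inside the sphere counts as a hit at distance 0,
    so a segment already buried in the mesh still registers.
    """
    oc = _sub(origin, center)
    c = _dot(oc, oc) - radius * radius
    if c <= 0.0:
        return 0.0
    length = math.sqrt(_dot(direction, direction))
    d = (direction[0] / length, direction[1] / length, direction[2] / length)
    b = _dot(oc, d)
    if b > 0.0:               # outside the sphere and pointing away from it
        return None
    disc = b * b - c
    if disc < 0.0:            # ray passes beside the sphere
        return None
    t = -b - math.sqrt(disc)  # nearer of the two intersections
    return t if t <= max_length else None


def finger_hits(joints: Sequence[Vec3], center: Vec3, radius: float) -> List[Vec3]:
    """Cast one ray along each segment of a finger, joint to next joint.

    Four joints (a finger) give three rays; three joints (a thumb) give two.
    Returns the world-space hit points on the sphere.
    """
    hits: List[Vec3] = []
    for start, end in zip(joints, joints[1:]):
        seg = _sub(end, start)
        seg_len = math.sqrt(_dot(seg, seg))
        if seg_len == 0.0:
            continue  # degenerate segment: nothing to cast
        t = raycast_sphere(start, seg, seg_len, center, radius)
        if t is not None:
            s = t / seg_len
            hits.append((start[0] + seg[0] * s, start[1] + seg[1] * s, start[2] + seg[2] * s))
    return hits
```

Limiting each ray to its segment’s length keeps hits local to the part of the finger that is actually touching the sphere.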

Bloop! The dimple follows the finger wherever it intersects the sphere.
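One way to get this behavior is to push vertices near the entry point inward along their normals. This assumes the sphere is a simple vertex mesh that can be deformed on the CPU. In the hypothetical `dimple` helper below, the dent is as deep as the fingertip’s penetration. It fades out over an assumed `dimple_radius` with a raised-cosine falloff. The original prototype may well have used a different deformation.

```python
import math
from typing import List, Sequence, Tuple

# Illustrative sketch only: the helper name and falloff shape are hypothetical.
Vec3 = Tuple[float, float, float]


def dimple(vertices: Sequence[Vec3], center: Vec3, radius: float,
           fingertip: Vec3, dimple_radius: float = 0.03) -> List[Vec3]:
    """Push sphere vertices inward around the point where a fingertip entered.

    The dimple is centred on the surface point directly above the fingertip and
    is as deep as the fingertip's penetration, so the deepest vertex ends up at
    the fingertip. A raised-cosine falloff blends the dent back into the sphere.
    """
    offset = (fingertip[0] - center[0], fingertip[1] - center[1], fingertip[2] - center[2])
    tip_dist = math.sqrt(offset[0] ** 2 + offset[1] ** 2 + offset[2] ** 2)
    depth = radius - tip_dist
    if depth <= 0.0 or tip_dist == 0.0:
        return list(vertices)  # finger outside the sphere (or degenerate): no dimple
    # Surface point on the line from the centre through the fingertip.
    contact = tuple(c + o / tip_dist * radius for c, o in zip(center, offset))
    out: List[Vec3] = []
    for v in vertices:
        d = math.dist(v, contact)
        if d >= dimple_radius:
            out.append(v)
            continue
        falloff = 0.5 * (1.0 + math.cos(math.pi * d / dimple_radius))  # 1 at centre, 0 at rim
        n = tuple(vi - ci for vi, ci in zip(v, center))
        n_len = math.sqrt(sum(x * x for x in n))
        out.append(tuple(vi - ni / n_len * depth * falloff for vi, ni in zip(v, n)))
    return out
```

Because the dent is recomputed from the current fingertip position every frame, it follows the finger as it slides across the surface.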

In a variation on this concept, we tried adding a fingertip color gradient. This time, instead of being driven by proximity to an object, the gradient was driven by the finger’s depth inside the object.
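The key difference from a proximity gradient is the driver: the blend factor comes from penetration depth rather than distance to the surface. Below is a Python sketch for a sphere. It uses a hypothetical `depth_tint` function and an assumed `max_depth` of 2 cm, at which the color saturates.

```python
import math
from typing import Tuple

# Illustrative sketch only: the function name and max_depth are hypothetical.
Vec3 = Tuple[float, float, float]
Color = Tuple[float, float, float]


def depth_tint(fingertip: Vec3, center: Vec3, radius: float,
               finger_color: Color, deep_color: Color,
               max_depth: float = 0.02) -> Color:
    """Fingertip colour driven by how far the tip has sunk into a sphere.

    Outside the sphere the finger keeps its normal colour; the blend toward
    `deep_color` grows linearly with penetration depth, saturating at max_depth.
    """
    depth = radius - math.dist(fingertip, center)  # > 0 only when inside
    t = min(max(depth / max_depth, 0.0), 1.0)
    return tuple(f + (d - f) * t for f, d in zip(finger_color, deep_color))
```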

Pushing this concept of reactive affordances even further, we thought: what if, instead of deforming in response to hand or finger penetration, the object could anticipate your hand and carve out finger holds before you even touched the surface?

Basically, we wanted to create virtual ACME holes.

To do this, we increased the length of the fingertip raycast so that a hit would register well before your finger made contact with the surface. Using two different meshes and a rendering rule, we created the illusion of a movable ACME-style hole.
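The article doesn’t spell out the two meshes or the rendering rule. A common way to fake a hole is a stencil-style mask: the object’s outer surface is discarded inside the hole’s outline, so an inner “hole” mesh shows through. The Python below models only that logic, under those assumptions. A hypothetical `hole_radius` opens the hole as the extended fingertip ray’s hit distance shrinks, and a predicate stands in for the mask test. The `LOOKAHEAD` and `MAX_HOLE_RADIUS` values are invented for illustration.

```python
import math
from typing import Optional, Tuple

# Illustrative sketch only: names, values, and the masking rule are assumptions.
Vec3 = Tuple[float, float, float]

LOOKAHEAD = 0.06         # extended fingertip ray length in metres (assumed)
MAX_HOLE_RADIUS = 0.012  # radius of a fully open hole in metres (assumed)


def hole_radius(hit_distance: Optional[float],
                lookahead: float = LOOKAHEAD,
                max_radius: float = MAX_HOLE_RADIUS) -> float:
    """Radius of the ACME-style hole in front of one fingertip.

    `hit_distance` is how far along the extended fingertip ray the object's
    surface was hit, or None on a miss. The hole starts to open as soon as the
    surface is within `lookahead` and is fully open at contact.
    """
    if hit_distance is None or hit_distance > lookahead:
        return 0.0
    approach = 1.0 - max(hit_distance, 0.0) / lookahead  # 0 at lookahead, 1 at contact
    return max_radius * approach


def outer_surface_visible(fragment: Vec3, hole_center: Vec3, radius: float) -> bool:
    """Stand-in for the masking rule between the two meshes.

    Fragments of the outer mesh inside the hole disc are discarded, so an
    inner "hole" mesh drawn behind them shows through instead.
    """
    return math.dist(fragment, hole_center) >= radius
```

In a real renderer, the hole disc would be drawn into a stencil buffer and the outer mesh would be rendered with a stencil test. The predicate above only captures the per-fragment decision.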

These effects made grabbing an object feel much more coherent, as though our fingers were being invited to intersect the mesh. Clearly, this approach would need a more complex system to handle objects other than spheres, parts of the hand other than fingers, and ACME holes that must combine when fingers get very close to each other. Nonetheless, the concept of reactive affordances holds promise for resolving unpredictable grabs.

Hand-centric design for VR is a vast possibility space, from truly 3D user interfaces to virtual object manipulation to locomotion and beyond. As creators, we all have the opportunity to combine the best parts of familiar physical metaphors with the unbounded potential offered by the digital world. Next time, we’ll really bend the laws of physics with the power to magically summon objects at a distance!