Facebook published new research today which the company says shows the “thinnest VR display demonstrated to date,” in a proof-of-concept headset based on folded holographic optics.

Facebook Reality Labs, the company’s AR/VR R&D division, today published new research demonstrating an approach which combines two key features: polarization-based optical ‘folding’ and holographic lenses. In the work, researchers Andrew Maimone and Junren Wang say they’ve used the technique to create a functional VR display and lens that together are just 9mm thick. The result is a proof-of-concept VR headset which could truly be called ‘VR glasses’.

The approach has other benefits beyond its incredibly compact size; the researchers say it can also support a significantly wider color gamut than today’s VR displays, and that their display makes progress “toward scaling resolution to the limit of human vision.”

Let’s talk about how it all works.

Why Are Today’s Headsets So Big?

It’s natural to wonder why even the latest VR headsets are essentially just as bulky as the first generation of headsets that launched back in 2016. The answer is simple: optics. Unfortunately the solution is not so simple.

Every consumer VR headset on the market uses effectively the same optical pipeline: a macro display behind a simple lens. The lens is there to focus the light from the display into your eye. But in order for that to happen the lens needs to be a few inches from the display, otherwise it doesn’t have enough focusing power to focus the light into your eye.

That necessary distance between the display and the lens is the reason why every headset out there looks like a box on your face. The approach is still used today because the lenses and the displays are known quantities; they’re cheap and simple, and although bulky, they achieve a wide field of view and high resolution.
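As a rough illustration of why that gap is hard to shrink, the idealized thin-lens equation ties the display distance to the focal length of the lens. The sketch below is not from Facebook's research; the function name, the 40 mm focal length, and the 2 m virtual image distance are made-up example values, and real headset lenses are not ideal thin lenses.

```python
def display_distance_mm(focal_length_mm: float, virtual_image_mm: float) -> float:
    """Lens-to-display distance that puts a virtual image at virtual_image_mm.

    Idealized thin-lens equation for a virtual image on the display side:
        1/f = 1/d_display - 1/d_image
    """
    if focal_length_mm <= 0 or virtual_image_mm <= 0:
        raise ValueError("distances must be positive")
    return 1.0 / (1.0 / focal_length_mm + 1.0 / virtual_image_mm)


# Hypothetical numbers: a 40 mm focal length lens, image focused 2 m away.
gap = display_distance_mm(40.0, 2000.0)
print(f"display sits {gap:.1f} mm behind the lens")  # 39.2 mm
```

In this simplified model the display always ends up just inside the focal length, so a thinner headset needs a shorter focal length, which means a stronger and harder-to-build lens.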

Many solutions have been proposed for making VR headsets smaller, and just about all of them include the use of novel displays and lenses.

The new research from Facebook proposes the use of both folded optics and holographic optics.

Folded Optics

What are folded optics? It’s not quite what it sounds like, but once you understand it, you’d be hard pressed to come up with a better name.

While the simple lenses in today’s VR headsets must be a certain distance from the display in order to focus the light into your eye, the concept of folded optics proposes ‘folding’ that distance over on itself, such that the light still traverses the same distance necessary for focusing, but its path is folded into a more compact area.

You can think of it like a piece of paper with an arbitrary width. When you fold the paper in half, the paper itself is still just as wide as when you started, but its width occupies less space because you folded it over on itself.
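The paper analogy can be put into numbers with a deliberately simplified sketch. It assumes the light crosses the same gap an odd number of times and that only the total path length matters; the function name and the 39 mm path are hypothetical, and real folded designs also rely on curved reflective surfaces to do part of the focusing.

```python
def folded_gap_mm(optical_path_mm: float, passes: int) -> float:
    """Physical gap needed when light crosses the same gap `passes` times."""
    if passes < 1 or passes % 2 == 0:
        # Light has to exit on the far side, so it crosses an odd number of times.
        raise ValueError("passes must be a positive odd number")
    return optical_path_mm / passes


path = 39.0  # hypothetical optical path length in millimeters
for passes in (1, 3):
    print(f"{passes} pass(es): {folded_gap_mm(path, passes):.1f} mm gap")
```

With one pass the gap equals the full 39 mm path; with three passes (out, back, and out again) the same path fits in a 13 mm gap.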

But how the hell do you do that with light? Polarization is the key.

It turns out that beams of light have an ‘orientation’. Normally the orientation of light beams is random, but you can use a polarizer to only let light of a specific orientation pass through. You can think of a polarizer like the coin-slot on a vending machine: it will only accept coins in one orientation.

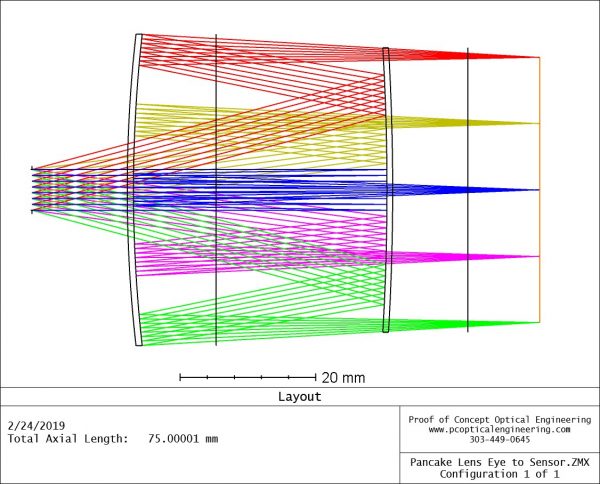
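For readers who want the coin-slot idea in numbers, Malus's law gives the fraction of already-polarized light that an ideal polarizer lets through as a function of the angle between the light's orientation and the polarizer's axis. This is a textbook sketch with an illustrative function name, not code from the research.

```python
import math


def transmitted_fraction(angle_deg: float) -> float:
    """Malus's law: fraction of polarized light passing an ideal polarizer."""
    return math.cos(math.radians(angle_deg)) ** 2


for angle in (0, 45, 90):
    print(f"{angle:>2} degrees -> {transmitted_fraction(angle):.2f}")
```

Aligned light (0 degrees) passes fully, light at 45 degrees passes at half strength, and light at 90 degrees is blocked. Changing the light's orientation between bounces is what lets an optical system decide when light passes a surface and when it does not.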

Using polarization, it’s possible to bounce light back and forth multiple times along an optical path before eventually letting it out and into the wearer’s eye. This approach (also known as ‘pancake optics’) allows the lens and the display to move much closer together, resulting in a more compact headset.

But to go even thinner—to shrink the size of the lenses themselves—Facebook researchers have turned to holographic optics.

Holographic Optics

Rather than using a series of typical lenses (like the kind found in a pair of glasses) in the folded optics, the researchers have formed the lenses into… holograms.

If that makes your head hurt, everything is fine. Holograms are nuts, but I’ll do my best to explain.

Unlike a photograph, which is a recording of the light in a plane of space at a given moment, a hologram is a recording of the light in a volume of space at a given moment.

When you look at a photograph, you can only see the information of the light contained in the plane that was captured. When you look at a hologram, you can look around the hologram, because the information of the light in the entire volume is captured (also known as a lightfield).

Now I’m going to blow your mind. What if when you captured a hologram, the scene you captured had a lens in it? It turns out, the lens you see in the hologram will behave just like the lens in the scene. Don’t believe me? Watch this video at 0:19 and look at the magnifying glass in the scene and watch as it magnifies the rest of the hologram, even though it is part of the hologram itself.

This is the fundamental idea behind Facebook’s holographic lens approach. The researchers effectively ‘captured’ a hologram of a real lens, condensing the optical properties of a real lens into a paper-thin holographic film.

So the optics Facebook is employing in this design are, quite literally, a hologram of a lens.