A prototype from Facebook Reality Labs researchers demonstrates a novel method for text input with controllerless hand-tracking. The system treats the hands and fingers as a sort of predictive keyboard which uses pinching gestures to select groups of letters.

Text input is crucial to many productivity tasks and it’s something which is still a challenge inside of AR and VR headsets. Yes, you can sit in front of a keyboard, but with a VR headset on you won’t be able to see the keyboard itself. For some very good typists, this isn’t an issue, but for most people it makes typing especially challenging. Even for good typists (or for AR headsets where the keyboard is visible), the need to sit in front of a keyboard keeps you chained to a desk, drastically reducing the freedom that you’d otherwise have with a fully tracked headset.

Voice input is one option, but problematic for several reasons. For one, it lacks discretion and privacy—anyone standing near you would not only have to hear you talk, but they would also hear the entire contents of your input. Another issue is that dictation is a somewhat different mode of thought than typing, and not as well suited for many common writing tasks.

Virtual keyboards are another option—where you use your fingers to poke at floating keys—but they’re too slow for serious writing tasks and lack physical feedback.

Facebook Reality Labs researchers have created a hand-tracking text input prototype, designed for AR and VR headsets, which throws out the keyboard as we know it.

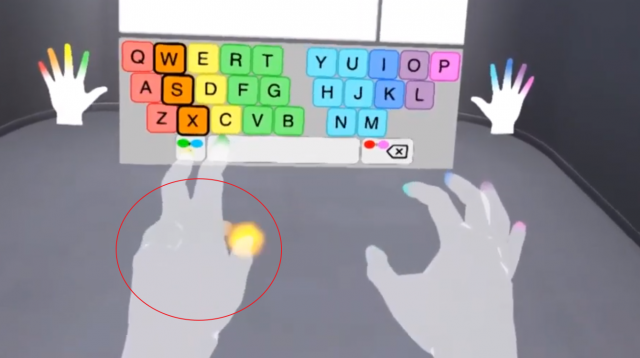

Instead of having you touch keys on a keyboard, the system maps a group of keys to each finger. Rather than selecting a specific letter, you pinch with the finger corresponding to whichever color-coded group contains the desired key. As you go, the system attempts to predict which word you want based on context, similar to a mobile swiping keyboard. The researchers call the system PinchType.
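To make the idea concrete, here is a minimal sketch of how an ambiguous, group-based keyboard can turn a sequence of pinches into candidate words. It is not the researchers' implementation. The finger-to-letter groups below are an assumption (loosely based on touch-typing finger assignments on a QWERTY layout), the vocabulary and its frequencies are made up, and the ranking uses simple word frequency rather than the context-based prediction described above.

```python
from collections import defaultdict

# Hypothetical finger-to-letter groups, loosely following touch-typing
# finger assignments on QWERTY. The real PinchType layout may differ.
FINGER_GROUPS = {
    "L_pinky": "qaz",
    "L_ring": "wsx",
    "L_middle": "edc",
    "L_index": "rfvtgb",
    "R_index": "yhnujm",
    "R_middle": "ik",
    "R_ring": "ol",
    "R_pinky": "p",
}

# Reverse lookup: which finger selects the group containing each letter.
LETTER_TO_FINGER = {
    letter: finger
    for finger, letters in FINGER_GROUPS.items()
    for letter in letters
}


def encode(word):
    """Return the pinch sequence a user would make to enter `word`."""
    return tuple(LETTER_TO_FINGER[ch] for ch in word.lower())


def build_index(word_freqs):
    """Map each pinch sequence to its candidate words, most frequent first."""
    index = defaultdict(list)
    for word, freq in word_freqs.items():
        index[encode(word)].append((freq, word))
    return {
        seq: [w for _, w in sorted(cands, key=lambda c: (-c[0], c[1]))]
        for seq, cands in index.items()
    }


def decode(pinches, index):
    """Return the ranked candidate words for a pinch sequence."""
    return index.get(tuple(pinches), [])


# Toy vocabulary with made-up frequencies, for illustration only.
TOY_VOCAB = {"the": 100, "run": 20, "fun": 30, "cat": 15, "hello": 25}
INDEX = build_index(TOY_VOCAB)

# "run" and "fun" share a pinch sequence, so both come back as candidates.
print(decode(encode("run"), INDEX))
```

Because several letters share each finger, different words can collide on the same pinch sequence. That is why a system like this needs a language model to choose among the candidates.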

PinchType overcomes many of the issues with typical virtual keyboards and voice input. It’s quiet, private, and looks to be much faster than hunt-and-peck on a floating virtual keyboard. It also provides feedback because you can feel when you touch your fingers together.

The researchers shared some initial findings from testing the system:

In a preliminary study with 14 participants, we investigated PinchType’s speed and accuracy on initial use, as well as its physical comfort relative to a mid-air keyboard. After entering 40 phrases, most people reported that PinchType was more comfortable than the mid-air keyboard. Most participants reached a mean speed of 12.54 WPM, or 20.07 WPM without the time spent correcting errors. This compares favorably to other thumb-to-finger virtual text entry methods.
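For readers unfamiliar with the metric, the sketch below shows how words per minute is conventionally computed in text-entry studies, where one "word" is taken to be five characters. The article does not say which exact formula the researchers used, so the convention is an assumption here. The trial numbers are invented and are not data from the study.

```python
def words_per_minute(num_chars, seconds, chars_per_word=5):
    """Entry speed in WPM, using the common five-characters-per-word convention."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return (num_chars / chars_per_word) * 60 / seconds


# Hypothetical trial: a 30-character phrase entered in 30 seconds,
# 10 of which were spent correcting errors.
overall = words_per_minute(30, 30)
without_corrections = words_per_minute(30, 30 - 10)

print(overall, without_corrections)
```

The gap between the two figures shows how much of the total time goes to fixing mistakes rather than entering text.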

But there are some downsides. The system relies on accurate hand-tracking, and it runs into one of hand-tracking’s most challenging problems: as seen from a head-mounted camera, fingers are very commonly occluded by the back of the hand. Below, you can see that, from the camera’s viewpoint, it’s ambiguous whether the user is using their pinky or ring finger for the tap.

It’s very likely that the PinchType prototype was developed using high-end hand-tracking tech with external cameras (to remove sub-par accuracy from the equation). We’ll have to wait for the full details of the system to be published to know if the researchers believe these occluded cases present an issue for an inside-out hand-tracking system.

The PinchType prototype is the work of Facebook Reality Labs researchers Jacqui Fashimpaur, Kenrick Kin, and Matt Longest. The work was presented under the title “Text Entry for Virtual and Augmented Reality Using Comfortable Thumb to Fingertip Pinches.”

The work was published as part of CHI 2020, a conference focused on human-computer interaction.