Oculus’ PC SDK version 1.19 now supports NVIDIA’s VRWorks Lens Matched Shading (LMS) technique, offering “a performance boost and a slight quality improvement” on supported GPUs.

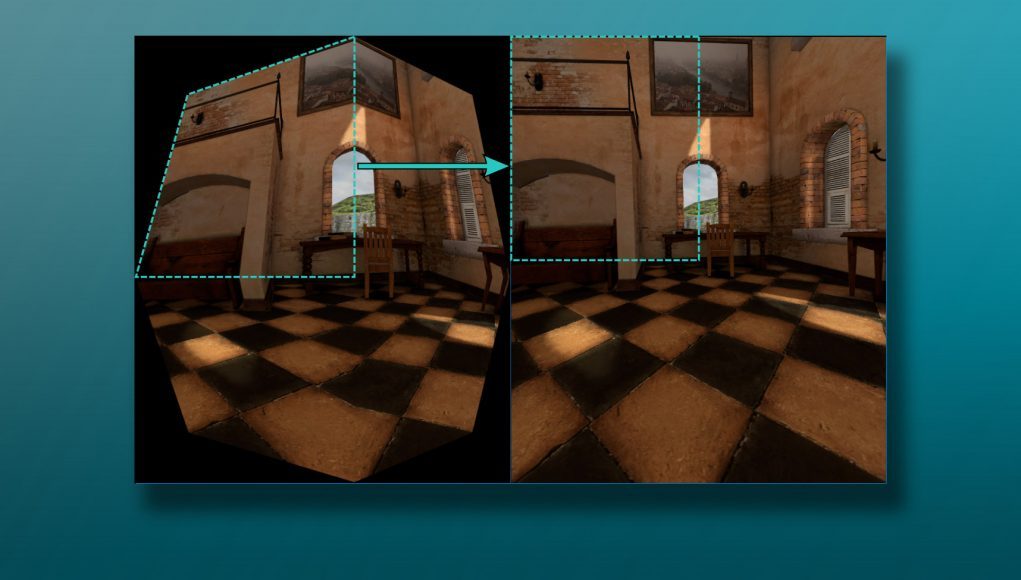

Lens Matched Shading is part of NVIDIA’s VRWorks toolset, and takes advantage of the architecture of the company’s Pascal-based GPUs to better match the pre-warped VR image to the final output image, thereby reducing the rendering of pixels that would otherwise not be visible after the distortion-correction phase. LMS also provides a more even distribution of pixel sampling between the initially rendered image and what ends up being seen through the lens. Back in 2016, NVIDIA offered an approachable explanation of LMS.
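To make the idea concrete, here is a minimal sketch of the kind of warp involved. It assumes the form described in NVIDIA's VRWorks material, where each vertex's clip-space `w` is modified to `w' = w + A|x| + B|y|` before the perspective divide. The function names and the coefficient values below are hypothetical and chosen only for illustration; this is not Oculus' or NVIDIA's actual code.

```python
def lms_warp(x, y, w=1.0, a=0.5, b=0.5):
    """Apply an LMS-style w modification, then the perspective divide.

    a and b are illustrative warp coefficients (assumed values, not
    taken from any SDK). Larger values squeeze the periphery harder.
    """
    w_mod = w + a * abs(x) + b * abs(y)
    return x / w_mod, y / w_mod


def sample_spacing(x, step=1e-3, a=0.5):
    """Estimate how much screen space a small step along x occupies.

    A value near 1.0 means full resolution; smaller values mean fewer
    pixels are rendered for the same region of the scene.
    """
    x0, _ = lms_warp(x, 0.0, a=a)
    x1, _ = lms_warp(x + step, 0.0, a=a)
    return (x1 - x0) / step


if __name__ == "__main__":
    print(lms_warp(1.0, 1.0))   # (0.5, 0.5): the corner is pulled inward
    print(sample_spacing(0.0))  # close to 1.0 at the center
    print(sample_spacing(0.9))  # noticeably smaller toward the edge
```

Under these assumptions the center of the view keeps nearly full resolution while the periphery, which the lens distortion pass would compress anyway, is rendered with fewer pixels.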

This week, Oculus announced the addition of LMS to their PC SDK 1.19. The company details the implementation in depth on its developer blog, and says that the feature “provides a performance boost and a slight quality improvement.”

NVIDIA’s LMS has been available in special builds of Unity and Unreal Engine for some time. Now that it’s built directly into Oculus’ SDK, developers working outside of those engines will have easier access to the feature, and it’s likely that Unity and Unreal Engine will see ongoing support for LMS in their main branch releases.

LMS requires a GPU based on NVIDIA’s Pascal architecture (GTX 1060 or Quadro P4000 and above). According to Oculus’ hardware report at the time of writing, 92.2% of Rift users run NVIDIA GPUs (65.8% Pascal-based), so the addition of LMS seems like a pragmatic way for the company to bring performance gains to many of its users, though investing in NVIDIA-specific technologies certainly doesn’t curry favor with AMD and its users.