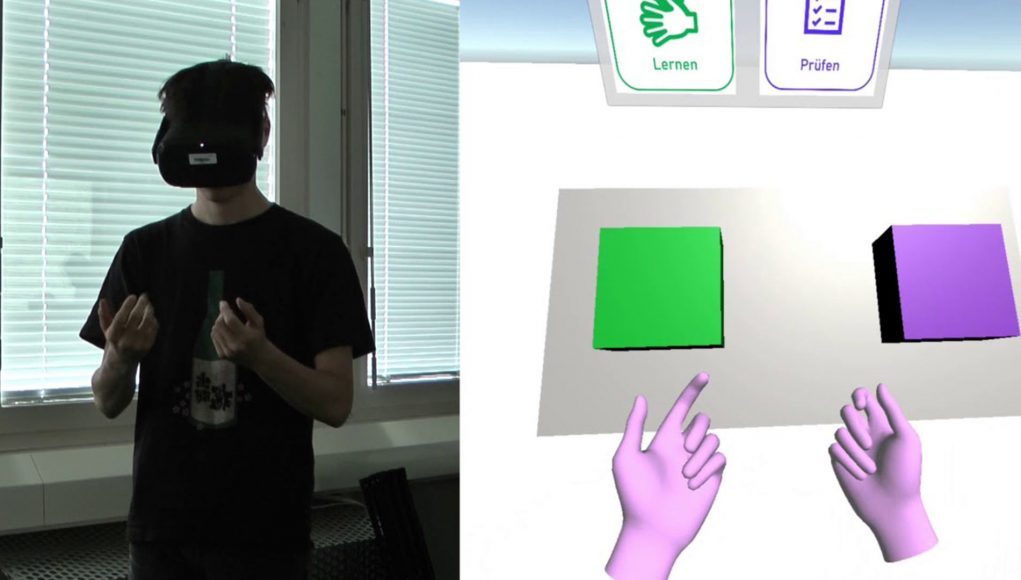

There’s no comprehensive app out there yet for learning sign language in VR. However, a student in the computer science department at the Bern University of Applied Sciences is using Oculus Quest’s native hand-tracking to see just how close we might get to achieving that goal with today’s tech.

Cédric Girardin, an apprentice at the Switzerland-based university’s Computer Perception and Virtual Reality research group (cpvrLab), created a basic Quest app that can recognize and teach users up to 23 hand signs from the German fingerspelling alphabet.

Every sign you perform is recognized and displayed in 3D; the application analyzes your hand in real time and validates whether you are performing the sign correctly.

Created as Girardin’s final project, the so-called ‘VR-Trainingsapplication for finger alphabet’ is still limited to the German fingerspelling method; however, Girardin intends to make it open source soon.

Girardin says in a Reddit post that the application compares a saved hand model with the current hand pose, making it technically possible to add “any type of handsign [sic] to the application and it will validate it.”
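
To make the idea concrete, here is a minimal sketch of how comparing a saved hand model against a live pose could work. It is not Girardin's code: the pose format (joint name mapped to an offset from the wrist, in metres), the function names `joint_error` and `matches`, and the 2 cm tolerance are all assumptions chosen for illustration.

```python
import math
from typing import Dict, Tuple

# Hypothetical pose format: joint name -> (x, y, z) offset from the wrist, in metres.
Pose = Dict[str, Tuple[float, float, float]]


def joint_error(saved: Pose, current: Pose) -> float:
    """Return the largest distance between matching joints of two poses."""
    return max(math.dist(saved[name], current[name]) for name in saved)


def matches(saved: Pose, current: Pose, tolerance: float = 0.02) -> bool:
    """True when every joint lies within `tolerance` metres of its saved position."""
    return joint_error(saved, current) <= tolerance


# Made-up sample data: one saved sign and two live poses to check against it.
saved_sign = {"thumb_tip": (0.03, 0.02, 0.00), "index_tip": (0.00, 0.09, 0.00)}
close_pose = {"thumb_tip": (0.03, 0.03, 0.00), "index_tip": (0.00, 0.09, 0.01)}
far_pose = {"thumb_tip": (0.03, 0.02, 0.00), "index_tip": (0.00, 0.04, 0.05)}

print(matches(saved_sign, close_pose))
print(matches(saved_sign, far_pose))
```

Under a scheme like this, adding a new sign would only mean saving another reference pose, which fits Girardin's remark that any type of hand sign could be added and validated.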

If you want to try it out for yourself, it’s available for free to sideload on Quest via SideQuest, the unofficial library of Quest games, experiences, and tools. Follow this handy guide on how to sideload apps on Quest to get started.

More Robust Sign Language in VR?

VR users have been communicating in a simplified version of sign language for some time now. However, deaf users have had trouble with the current state of VR, according to VR Chat user ‘Quentin’, a hearing person who volunteers as a translator.

Speaking to 2 Girls, 1 Podcast, Quentin explains that because VR controllers don’t allow for full finger tracking, accurate signing simply isn’t possible. This, Quentin says, has given rise to new signs so deaf users can still communicate with each other in VR for now. More development is still required, however, before users can express themselves in their native, non-modified sign language.

Several sign languages already exist, divided by language group and in some cases by region, so a more accurate input method is needed to avoid complicating matters further.

That said, optical hand-tracking like that seen on Quest isn’t a perfect method, but it does seem to address some of the issues seen in the deaf VR community thus far. Check out Quentin and a few other VR Chat signers in action below: