The FIFA World Cup final is nearly here, and while we still live in a time when almost everyone interested in the France vs. Croatia match will be glued to a TV set, researchers from the University of Washington, Facebook, and Google just gave us an early look at what an AR soccer match could look like in the near future.

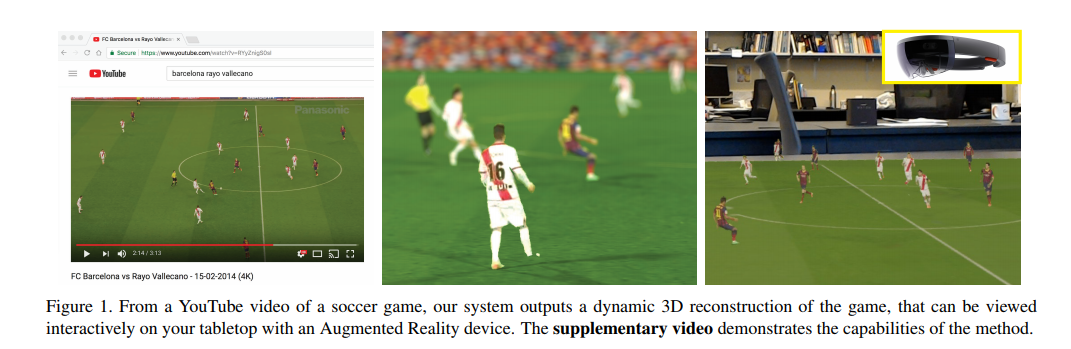

The researchers have devised an end-to-end system that creates a moving 3D reconstruction of a real soccer match, which they say in their paper can be viewed with a 3D viewer or an AR device such as a HoloLens. They did it by training a convolutional neural network (CNN) on hours of virtual player data captured from EA’s FIFA video games, which essentially gave the team what it needed to ingest a single monocular YouTube video and output a sort of 2D/3D hybrid.

Researchers involved in the project are University of Washington’s Konstantinos Rematas, Ira Kemelmacher-Shlizerman (also Facebook), Brian Curless, and Steve Seitz (also Google).

A few caveats should temper your expectations of seeing a ‘perfect’ 3D reconstruction that you could watch from any angle: players are still projected as 2D textures, the positioning of individual players is still a bit jittery, and the ball isn’t tracked yet. The team says ball tracking, an indispensable part of the equation, is coming in the future. Also, because the system is based on single monocular shots, occlusion is an issue: when a player is hidden from the camera, their texture disappears from view.

The implication of watching a (nearly) live soccer match in AR is still pretty astounding though, especially on your living room coffee table.

“There are numerous challenges in monocular reconstruction of a soccer game. We must estimate the camera pose relative to the field, detect and track each of the players, re-construct their body shapes and poses, and render the combined reconstruction,” the team writes.
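To give a feel for why camera pose matters in that list, here is a minimal sketch of one small piece of geometry: once the camera's pose relative to the field is known, a pixel at a player's feet can be mapped to a position on the pitch by intersecting the viewing ray with the ground plane. This is standard pinhole-camera math and not the team's actual code. The function names, the intrinsics (`fx`, `fy`, `cx`, `cy`), and the overhead camera in the usage lines are all assumptions made for illustration.

```python
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Sequence[Sequence[float]]  # row-major 3x3 rotation, world -> camera


def transpose_mul(R: Mat3, v: Vec3) -> Vec3:
    """Return R^T @ v, rotating a camera-frame vector into the world frame."""
    return tuple(sum(R[r][c] * v[r] for r in range(3)) for c in range(3))


def pixel_to_field(u: float, v: float,
                   fx: float, fy: float, cx: float, cy: float,
                   R: Mat3, t: Vec3) -> Vec3:
    """Intersect the viewing ray through pixel (u, v) with the field plane z = 0.

    Assumes a pinhole camera with x_cam = R @ x_world + t and no lens
    distortion (hypothetical setup, not taken from the paper).
    """
    # Ray direction through the pixel, expressed in camera coordinates.
    d_cam = ((u - cx) / fx, (v - cy) / fy, 1.0)
    d_world = transpose_mul(R, d_cam)

    # Camera centre in world coordinates: C = -R^T t.
    rt = transpose_mul(R, t)
    cam = (-rt[0], -rt[1], -rt[2])

    if abs(d_world[2]) < 1e-12:
        raise ValueError("viewing ray is parallel to the field plane")
    s = -cam[2] / d_world[2]  # distance along the ray to reach z = 0
    if s <= 0:
        raise ValueError("field plane is behind the camera")

    return (cam[0] + s * d_world[0], cam[1] + s * d_world[1], 0.0)


# Hypothetical overhead camera 10 m above the centre spot, looking straight down.
R_down = [[1, 0, 0], [0, -1, 0], [0, 0, -1]]
t_down = (0.0, 0.0, 10.0)
feet = pixel_to_field(840, 60, 1000, 1000, 640, 360, R_down, t_down)
print(feet)  # approximately (2.0, 3.0, 0.0), in metres on the pitch
```

A real broadcast camera pans, tilts, and zooms, so the pose would have to be re-estimated for every frame, which is part of why the team calls it a challenge.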

Viewing live matches won’t be possible for a while either, the team says. To watch a full match on an AR device such as a HoloLens, the system still requires a real-time reconstruction method and a method for efficient data compression and streaming to deliver it to your AR headset.

Because the system relies on standard footage, it represents a sort of low-hanging fruit of what’s possible now with current capture tech. Even though it’s based on 4K video, the footage still suffers from chromatic aberration, motion blur, and compression artifacts.

Ideally, a stadium would be outfitted with multiple cameras dedicated to AR capture for the best possible outcome. That isn’t the goal of the paper, but the work is a definite building block on the way to live 3D sports in AR.

The team’s research will be presented at the Computer Vision and Pattern Recognition conference, which is taking place June 18-22 in Salt Lake City, Utah.