Outside of a few demonstrations at tradeshows, Meta has only given glimpses of the content on its impressive AR headset dev kit in short clips. During a recent visit to the company’s Silicon Valley headquarters to check out the latest improvements to the headset, I got to see a selection of demo content which Meta has allowed Road to VR to capture and share in full for the first time.

Having initially taken pre-orders for the $3,000 Meta Pro glasses back in 2013, the company rebooted the headset and revealed it in early 2016 as the Meta 2, this time positioned as a more affordable (at $949) development kit with a much wider and more immersive field of view of 90 degrees (compared to 36 degrees on the original). After taking pre-orders throughout 2016, Meta tells Road to VR that the Meta 2 dev kit is now shipping in limited quantities, and the company expects to ramp up shipments in the months ahead.

We went hands-on with the Meta 2 back at its reveal in early 2016 and concluded that while AR in general is still early in development compared to VR (a sentiment shared by Facebook), the headset could play a significant role in the development of the eventual consumer technology:

Many people right now think that the VR and AR development timelines are right on top of each other—and it’s hard to blame them because superficially the two are conceptually similar—but the reality is that AR is, at best, where VR was in 2013 (the year the DK1 launched). That is to say, it will likely be three more years until we see the ‘Rifts’ and ‘Vives’ of the AR world shipping to consumers.

Without such recognition, you might look at Meta’s teaser video and call it overhyped. But with it, you can see that it might just be spot on, albeit on a delayed timescale compared to where many people think AR is today.

Like Rift DK1 in 2013, Meta 2 isn’t perfect in 2016, but it could play an equally important role in the development of consumer augmented reality.

At the time we weren’t able to share an in-depth look at what it was actually like to use the headset, save for a few short clips taken from promotional videos released by the company. Now Meta has exclusively allowed us to capture and share the full length of several of the headset’s demo experiences, running on the shipping version of the development kit, blemishes and all.

A few notes to make sense of the videos below:

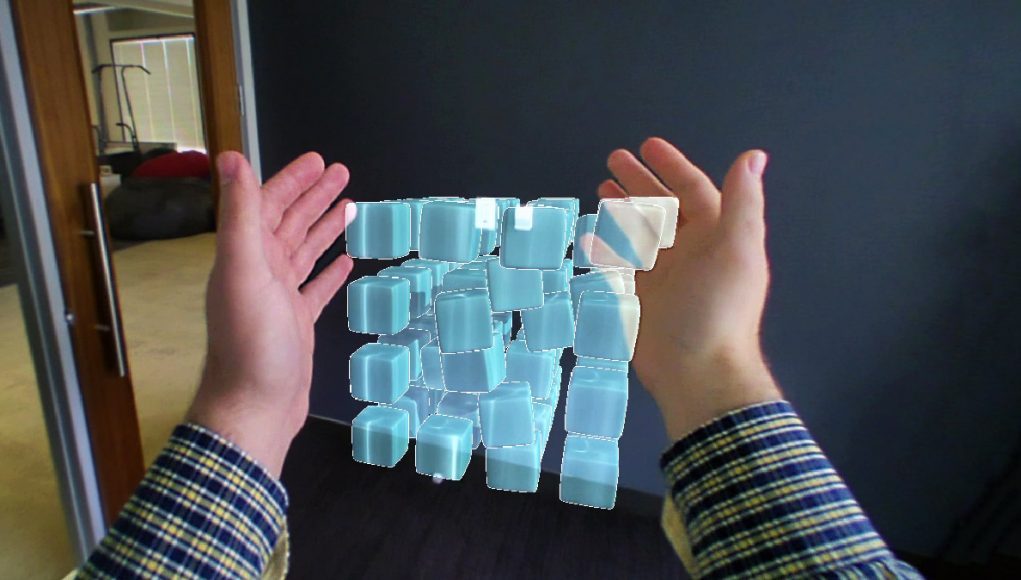

- The footage is captured with the Meta 2’s front-facing camera, and then overlaid with the AR content, so this isn’t precisely what it looks like through the headset’s lens itself, but it’s pretty darn close.

- Through the headset, the image actually looks a lot sharper than what you’re seeing here; all the text in the demos was quite readable. For comparison, the captures are done at 1280×720 (and not at a very good bit-rate), while the Meta 2 provides a 1280×1440 per-eye resolution.

- The AR content is rendered to be correct for my perspective, not the camera’s. That means that when I reach out to touch things it sometimes looks like my hand is way off from the camera’s vantage point, but to me it actually looks like I’m touching the objects directly (which feels surprisingly cool when you reach out and it’s right where you think it is).

- Occlusion is not rendered in these videos, though it is present in the view through the headset. So while it looks like I’m reaching behind some objects (partly a consequence of the point above), there’s some (rough) occlusion happening in my view which, combined with stereoscopy, maintains the illusion that the objects are actually behind my hands and out in the real world.

- The tracking fidelity and latency seen in the videos are a fair representation of what I currently saw through the lens itself.

- You will see some moments when I clip through the AR content, though the clipping doesn’t align with my own view through the headset due to the camera’s different perspective.

- The picture-in-picture video from the outside view is hand-synchronized and isn’t representative of actual latency.

- The field of view seen here is technically representative of what it looks like in the headset, except that the field of view of the human eye is much wider than what the camera captures; thus while the AR content can be seen here stretching across the entire width of the camera’s captured field of view, it doesn’t stretch across the entire human field of view. That said, it’s quite wide and much more compelling compared to a lot of other AR headsets out there.

- There’s some occasional jumpiness in the framerate of the capture (especially during the brain scene), likely due to the capture process struggling to keep up; through the headset the scenes were perfectly smooth.

Meta 2 Evolving Brain Demo

Meta 2 Cubes, Earth, and Car Demo

Takeaway

Meta 2’s biggest strength is its wide field of view and its biggest weakness is tracking fidelity (both latency and jitter). I’ve often said that if you mashed together HoloLens’ tracking and Meta’s field of view, you’d have something truly compelling. Meta said they would ship with “excellent” inside-out tracking, but as of today they aren’t there yet.

That said, this is a development kit. The company tells me that there’s still optimization to be done along the render pipeline, including the use of timewarp—an important latency-reducing technique—which is not currently implemented.

Consider the Rift DK1 back in 2013: it didn’t have perfect head tracking or low enough latency, but it was still enough to stir the imaginations of developers and get the ball rolling on VR app development. Meta knows that their tracking isn’t consumer ready yet, but the company intends to get there (and they seem committed to doing it in-house). Meanwhile, the Meta 2 could serve as that kick-in-the-imagination that gets a core community of developers excited about the possibilities of AR and actually starting to build for the platform.

For a deeper dive into our hands-on time with Meta 2, be sure to see our prior writeup.