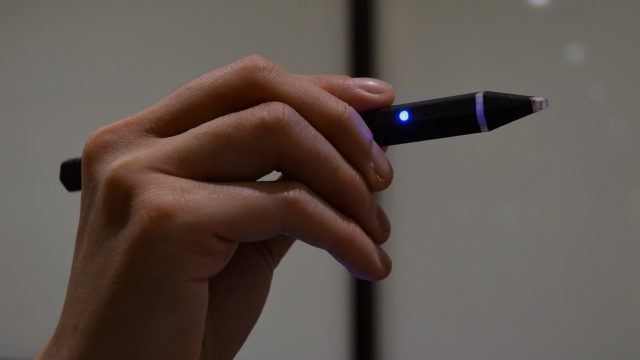

Massless is developing a stylus designed specifically for high-precision VR input. We got to check out a prototype version of the device this week at GDC 2018.

While game-oriented controllers are the norm for VR input today, Massless hopes to bring another option to market for use cases that benefit from greater precision, like CAD. Controllers like Oculus’ Touch and HTC’s Vive wands are quite precise, but they are articulated primarily by our wrists and miss out on the fine-grained control that comes from our fingers. When you write on a piece of paper, notice how much more your fingers control the movement than your wrist does. This precision is amplified by the tabletop surface, which acts as an anchor for your finger movements. Massless has created a tracked stylus with the goal of bringing the precision of writing implements into virtual reality, with a focus on enterprise use cases.

At GDC I saw a working, 3D-printed prototype of the Massless Pen running in conjunction with the Oculus Rift headset. The system uses a separate camera, aligned with the Rift’s sensor, to track the tip of the stylus. With the stylus held in my left hand and a Touch controller in my right, a simple demo application placed me in an empty room where I could see the tip of the pen moving around in front of me. I could draw in the air by holding a button on the Touch controller and moving the stylus, and I could use the controller’s stick to adjust the size of the stroke.

Using the Massless Pen felt a lot like drawing in the air with an app like Tilt Brush, but I was also able to write tiny letters quite easily. Without a task-based comparison or an objective way to measure controller and stylus side by side, though, it’s tough to judge the pen’s precision just by playing with it; I can only say that it feels at least as precise as the Touch and Vive controllers.

Since the ‘action’ of writing in real life begins ‘automatically’ when your writing implement touches the writing medium, it felt a little awkward to have to press a button (especially one on my other hand) to start each stroke. The Massless Pen itself could of course have a button (which might feel more natural, since stroke initiation would happen in the same hand as the writing), but the company says it has steered away from that because pressing a button on the pen would move it slightly, working against the precision it is trying to maintain.

If you’ve ever used one of the countless trigger-activated laser-pointer interfaces in VR, you’ll know this is a fair point: pointing with a laser and then pulling the controller’s trigger to initiate an action makes the laser move significantly, especially since the motion is amplified by leverage. Using my other hand to initiate strokes felt weird at first, but I’m fairly confident it would begin to feel natural over time, especially considering that many professional digital artists use drawing tablets, where they draw on one surface (the tablet) and see the result appear on another (the monitor).
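As a rough illustration of that leverage (a simple geometric approximation, not a measurement of any particular controller): if pulling the trigger rotates the controller by a small angle θ, the laser’s endpoint on a target at distance d shifts by about

$$
\Delta x = d \tan\theta \approx d\,\theta
\qquad\text{e.g. } \theta = 1^\circ,\ d = 3\,\text{m}:\quad
\Delta x = 3\,\text{m} \times \tan 1^\circ \approx 3\,\text{m} \times 0.0175 \approx 5.2\,\text{cm}
$$

The 1° and 3 m figures are assumed purely for the example; the point is that the error grows linearly with distance, whereas a pen tip has no lever arm beyond the stylus itself.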

Inside the demo I could see the white outline of a frustum projected from a virtual representation of the Rift sensor in front of me. The outline represented the trackable area of the Massless Pen’s own sensor, which was relatively narrow compared to the Rift’s tracking volume. If I moved the stylus outside the edge of the outline, it stopped tracking until I brought it back into view. As Massless continues to refine its product, I hope the company prioritizes growing the trackable area to be more comparable to that of the headset and controllers it’s used with.

While the Massless Pen prototype I used has full positional tracking, it currently lacks rotational tracking. That means it can only create strokes from a single point and can’t yet support strokes that would benefit from tilt information, though the company plans to add rotation eventually.

More than drawing in the air, I’m interested in VR stylus input for what it could mean for handwritten text input on an actual surface (rather than arbitrary strokes in the air). The stylus evolved for exactly this use case, and it could become a key productivity tool in VR. Drawing broad strokes in the air is nice, but writing benefits greatly from using the writing surface as an anchor for your hand, letting your dexterous fingers do the precision work. For anything but coarse annotations, if you’re planning to write in VR, it should be done against a real surface.

To see what that might be like with the Massless Pen, I tried writing ‘on’ the surface of the table I was sitting at. After sketching a few lines (as if coloring in a shape), I leaned down to see how consistently the lines aligned with the flat surface of the table. I was surprised at how flat the sketched area was overall (which suggests fairly precise, well-calibrated tracking), but I did notice regular bits of tiny jumpiness in the shape of individual lines (suggesting local jitter). Granted, this is to be expected: Massless says it hasn’t yet added ‘surface sensing’ to the pen, though it plans to. Surface sensing could reasonably be used to eliminate this jitter during real-surface writing, since the system would have a binary understanding of whether the pen is touching a real surface and could use that information to ‘lock’ the stroke to one plane.
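To make the plane-locking idea concrete, here is a minimal Python sketch under stated assumptions: each tracked tip position arrives with a hypothetical boolean `touching` flag (the surface sensing Massless hasn’t shipped yet), the table’s unit normal is known (y-up here), and each stroke’s plane passes through its first contact point. `PenSample` and `lock_to_surface` are illustrative names, not a Massless API. The sketch removes only the out-of-plane component of each sample, which is the kind of jumpiness visible when sighting along the table; jitter within the plane would still need separate filtering.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def scale(v: Vec3, k: float) -> Vec3:
    return (v[0] * k, v[1] * k, v[2] * k)


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


@dataclass
class PenSample:
    position: Vec3  # tracked tip position, in metres
    touching: bool  # hypothetical binary "pen is on a surface" flag


def lock_to_surface(
    samples: Sequence[PenSample],
    normal: Vec3 = (0.0, 1.0, 0.0),  # assumed unit-length surface normal (y-up table)
) -> List[Vec3]:
    """Flatten each on-surface run of samples onto the plane through its first contact point."""
    out: List[Vec3] = []
    anchor: Optional[Vec3] = None
    for s in samples:
        if not s.touching:
            anchor = None           # pen lifted: the next contact starts a new plane
            out.append(s.position)  # in-air samples pass through unchanged
            continue
        if anchor is None:
            anchor = s.position     # first contact point of this stroke defines the plane
        # Signed distance of this sample from the plane, measured along the normal.
        offset = dot(sub(s.position, anchor), normal)
        # Remove only that out-of-plane component; in-plane motion is kept.
        out.append(sub(s.position, scale(normal, offset)))
    return out


# Usage: a short stroke with a few millimetres of vertical jitter, then a lift.
stroke = [
    PenSample((0.00, 0.700, 0.0), True),
    PenSample((0.01, 0.702, 0.0), True),   # 2 mm of upward jitter
    PenSample((0.02, 0.699, 0.0), True),   # 1 mm of downward jitter
    PenSample((0.03, 0.750, 0.0), False),  # pen lifted off the table
]
for point in lock_to_surface(stroke):
    print(point)
```

Run on the sample stroke, the three on-surface points come back at y ≈ 0.700 m while the lifted point passes through unchanged. Anchoring to the first contact point is the simplest choice; a real system might instead calibrate the table plane ahead of time.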

The Massless Pen is interesting for in-air input, but since the stylus was born for writing on real surfaces, I hope the company increases its focus in that area, and allows 3D drawing and data manipulation to evolve as a natural, secondary extension of handwritten VR input.