Magic Leap, the mysterious AR startup with a multibillion-dollar valuation, still doesn’t have a headset to show the world. But a recent paper by Magic Leap researchers, entitled Toward Geometric Deep SLAM, gives us a peek at a novel machine vision technique that aims to bring the company closer to its goal of creating a robust standalone AR headset.

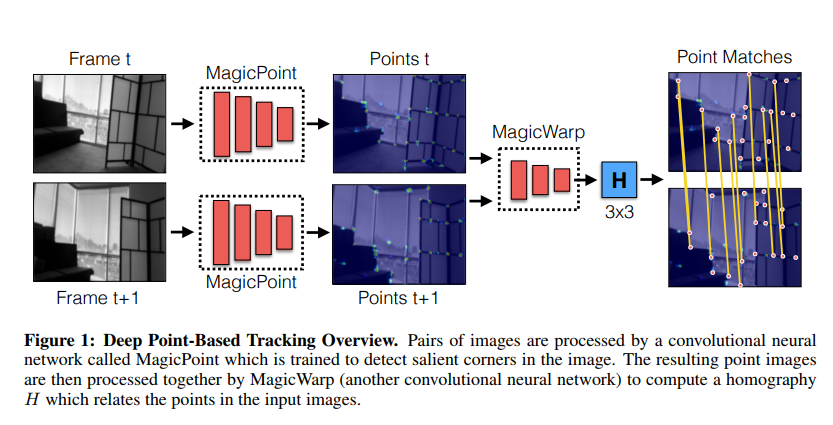

Authored by Magic Leap researchers Daniel DeTone, Tomasz Malisiewicz, and Andrew Rabinovich, the paper describes a tracking system powered by two deep convolutional neural networks (CNNs), a type of artificial ‘brain’ used for image processing. The researchers contend that the two CNNs, called MagicPoint and MagicWarp, allow for a system that’s “fast and lean, easily running 30+ FPS on a single CPU.”

Here’s the quick and dirty: According to the paper, MagicPoint operates on single images and extracts the 2D points that matter for tracking, with these points destined to be fed into a visual simultaneous localization and mapping (SLAM) algorithm. Comparing their network to classical point detectors, the team discovered “a significant performance gap in the presence of image noise.”
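For readers who want something concrete, here is a minimal sketch of the last step such a detector needs: turning a per-pixel score map into a list of 2D points. This is not Magic Leap's code. It assumes the network's output can be treated as a 2D array of "point-ness" scores, and the function name `heatmap_to_points`, the threshold, and the suppression radius are all hypothetical choices made for illustration.

```python
import numpy as np

def heatmap_to_points(heatmap, threshold=0.5, radius=1):
    """Return (row, col) coordinates of local maxima above a score threshold.

    Hypothetical helper: the threshold and radius are illustrative values,
    not parameters taken from the paper.
    """
    h, w = heatmap.shape
    # Pad with -inf so border pixels can be compared against a full window.
    padded = np.pad(heatmap, radius, mode="constant", constant_values=-np.inf)
    points = []
    for r in range(h):
        for c in range(w):
            score = heatmap[r, c]
            if score < threshold:
                continue
            # Window centered on (r, c) in the original image.
            window = padded[r:r + 2 * radius + 1, c:c + 2 * radius + 1]
            if score >= window.max():
                points.append((r, c))
    return points

# Toy score map standing in for a detector's output.
heatmap = np.zeros((6, 6))
heatmap[1, 1] = 0.9
heatmap[1, 2] = 0.6   # weaker neighbor of (1, 1), suppressed
heatmap[4, 3] = 0.8
heatmap[5, 5] = 0.2   # below the threshold, ignored
points = heatmap_to_points(heatmap)
print(points)
```

The suppression step keeps only the strongest response in each small neighborhood, so one corner in the image yields one point rather than a cluster.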

Because calculating the shape of objects as they move around isn’t an easy task (it could be either the object or the viewer moving), MagicWarp’s job is to take a pair of the point images generated by MagicPoint and essentially predict the motion between them as the system models the world around it. MagicWarp does this differently from traditional approaches because it uses only the points’ locations and not the more complicated ‘local point descriptors’, computer vision jargon for data that encodes unique identifying information about the area around each point.
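To get a feel for what "location only" means, the sketch below scores a candidate motion between two point sets without any descriptors or known matches. It is a classical stand-in, not the MagicWarp network: it assumes the motion can be written as a 3x3 homography, and `apply_homography`, `nearest_neighbor_error`, and the example matrix `H_true` are hypothetical names and values chosen for illustration.

```python
import numpy as np

def apply_homography(H, pts):
    """Map an (N, 2) array of 2D points through a 3x3 homography H."""
    pts_h = np.hstack([pts, np.ones((len(pts), 1))])  # homogeneous coordinates
    warped = pts_h @ H.T
    return warped[:, :2] / warped[:, 2:3]             # back to Cartesian

def nearest_neighbor_error(H, pts_a, pts_b):
    """Mean distance from each warped point of A to its closest point in B."""
    warped = apply_homography(H, pts_a)
    # Pairwise distances between every warped A point and every B point.
    dists = np.linalg.norm(warped[:, None, :] - pts_b[None, :, :], axis=2)
    return dists.min(axis=1).mean()

rng = np.random.default_rng(0)
pts_a = rng.uniform(0, 100, size=(50, 2))

# Assumed ground-truth motion, made up for this example.
H_true = np.array([[1.0, 0.02, 5.0],
                   [-0.01, 1.0, -3.0],
                   [1e-4, 0.0, 1.0]])

# Shuffle the second set so there are no known correspondences.
pts_b = rng.permutation(apply_homography(H_true, pts_a))

err_true = nearest_neighbor_error(H_true, pts_a, pts_b)
err_identity = nearest_neighbor_error(np.eye(3), pts_a, pts_b)
print(err_true, err_identity)
```

The correct motion lines every point up with some point in the second set, while a wrong guess (here, "no motion at all") leaves a gap. A learned system would have to produce the motion estimate itself; this snippet only shows how a guess can be judged from point positions alone.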

Tested using physical and synthetic data, the two convolutional neural networks are said to be capable of running in real time. “We believe that the day of massive-scale deployment of Deep-Learning powered SLAM systems is not far,” the authors conclude.

If your brain isn’t already spinning, check out the full paper here.

So while we don’t have a clear idea of exactly when Magic Leap will have a public prototype of its light field display-packing headset, or what CEO Rony Abovitz teases as “small, mobile, powerful and pretty cool,” we’ll take anything we can get after more than three years of waiting. Anything but the company’s post-cool, pre-factual marketing campaign, that is.