Hand-tracking on Quest rolled out as an experimental feature in late 2019, but Oculus is letting it gestate before it will accept third-party apps with hand-tracking. In the meantime, the company has published fresh developer documentation which establishes best practices for working within the limitations of Quest hand-tracking.

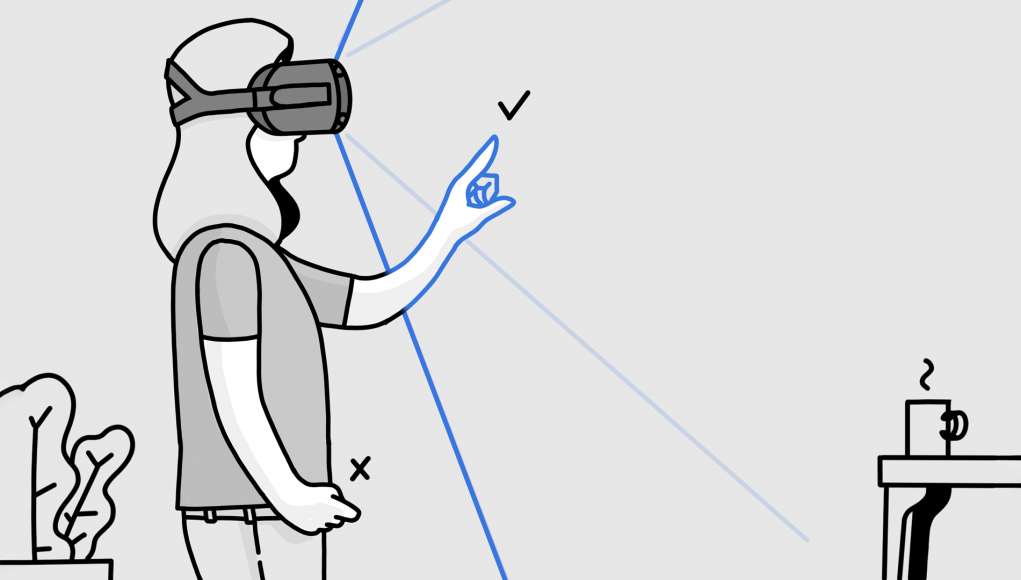

Hand-tracking brings many benefits to Quest, especially ease-of-use. And while Oculus’ first stab at the feature is reasonably solid, there are still limitations around accuracy, latency, pose detection, and tracking coverage. To help developers work within those limitations, a new section of the Oculus developer documentation called ‘Designing for Hands’ offers practical advice and considerations.

“In these guidelines, you’ll find interactions, components, and best practices we’ve validated through researching, testing, and designing with hands. We also included the principles that guided our process,” the documentation says. “This information is by no means exhaustive, but should provide a good starting point so you can build on what we’ve learned so far. We hope this helps you design experiences that push the boundaries of what hands can do in virtual reality.”

The document notes the challenges that come with the territory, and reminds developers to “remember that hands aren’t controllers.”

There are some complications that come up when designing experiences for hands. Thanks to sci-fi movies and TV shows, people have exaggerated expectations of what hands can do in VR. But even expecting your virtual hands to work the same way your real hands do is currently unrealistic for a few reasons.

- There are inherent technological limitations, like limited tracking volume and issues with occlusion

- Virtual objects don’t provide the tactile feedback that we rely on when interacting with real-life objects

- Choosing hand gestures that activate the system without accidental triggers can be difficult, since hands form all sorts of poses throughout the course of regular conversation

You can find solutions we found for some of these challenges in our Best Practices section.

[…]

It’s very tempting to simply adapt existing interactions from input devices like the Touch Controller, and apply them to hand tracking. But that process will limit you to already-charted territory, and may lead to interactions that would feel better with controllers while missing out on the benefits of hands.

Instead, focus on the unique strengths of hands as an input and be aware of the specific limitations of the current technology to find new hands-native interactions. For example, one question we asked was how to provide feedback in the absence of tactility. The answer led to a new selection method, which then opened up the capability for all-new 3D components.

It’s still early days, and there’s still so much to figure out. We hope the solutions you find guide all of us toward incredible new possibilities.

The ‘Interactions’ section of the document offers some of its most practical advice on how developers can let users interact with the virtual world through hand-tracking.

The document draws a clear distinction between Absolute and Relative interactions. Absolute interactions involve objects the user touches directly and controls 1:1. Relative interactions cover controlling objects at a distance in discrete ways, such as rotating an object around a single axis.
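To make the distinction concrete, here is a minimal Python sketch. It is not taken from Oculus’ documentation or SDK: the `Vec3`, `GrabbableObject`, `absolute_update`, and `relative_update` names, the 360-degrees-per-meter gain, and the 15-degree snap step are all hypothetical choices for illustration. In the absolute case the object simply follows the hand; in the relative case only horizontal hand movement counts, and it is converted into rotation around one axis in fixed steps.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec3:
    """Hypothetical 3D vector type (meters)."""
    x: float
    y: float
    z: float

    def __add__(self, other: "Vec3") -> "Vec3":
        return Vec3(self.x + other.x, self.y + other.y, self.z + other.z)


@dataclass
class GrabbableObject:
    """Hypothetical scene object with a position and a rotation about the vertical axis."""
    position: Vec3
    yaw_degrees: float = 0.0


def absolute_update(obj: GrabbableObject, hand_position: Vec3, grab_offset: Vec3) -> None:
    # Absolute interaction: the object tracks the hand 1:1, keeping the
    # offset between hand and object that existed when it was grabbed.
    obj.position = hand_position + grab_offset


def relative_update(
    obj: GrabbableObject,
    start_yaw: float,
    hand_start: Vec3,
    hand_now: Vec3,
    degrees_per_meter: float = 360.0,  # assumed gain, not an Oculus value
    snap_degrees: float = 15.0,        # assumed step size, not an Oculus value
) -> None:
    # Relative interaction: only sideways (x-axis) hand displacement is used,
    # so the object can rotate about one axis and nothing else.
    dx = hand_now.x - hand_start.x
    raw_degrees = dx * degrees_per_meter
    # Quantize into discrete steps so small tracking jitter doesn't
    # produce constant tiny rotations.
    steps = round(raw_degrees / snap_degrees)
    obj.yaw_degrees = (start_yaw + steps * snap_degrees) % 360.0
```

Snapping to discrete steps is one way to keep a distant object stable despite imprecise tracking; a real app might instead use a different gain, a deadzone, or no snapping at all.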

The ‘User Interface Components’ section makes specific suggestions about how elements like buttons and menus should work, and how they should be sized to suit the accuracy of Quest’s hand-tracking. It also shows examples of more complex interface modules, such as toggle switches, radial selectors, and scrolling lists.

Oculus says it isn’t yet accepting hand-tracking apps for Quest. The company plans to eventually graduate hand-tracking from an experiment to a full-fledged feature, and when it does, it will open the door to apps that use it. Oculus hasn’t indicated when that will happen, but we’d expect it sometime in 2020.

As for hand-tracking on Rift S, Oculus has so far announced the feature only for Quest and has not committed to bringing it to Rift S.