At Connect 2021 last week, Meta revealed a new Quest rendering technology called Application Spacewarp which it says can increase the performance of Quest apps by a whopping 70%. While similar to the Asynchronous Spacewarp tech available to Oculus PC apps, Meta says Application Spacewarp will produce even better results.

Update (November 12th, 2021): Meta has now released Application Spacewarp for developers. The tools include support for Unity, Unreal Engine, and native applications. The company has also updated the OVR Metrics Tool with Application Spacewarp metrics.

The original article, which gives an overview of the Application Spacewarp rendering tech, continues below.

Original Article (November 5th, 2021): Given that Quest is powered by a mobile processor, developers building VR apps need to think carefully about performance optimization in order to hit the minimum bar of 72 FPS to match the headset’s 72Hz display. It’s even harder if they want to use the 90Hz or 120Hz display modes (which make apps look smoother and reduce latency).

Considering the high bar for performance on Quest’s low-powered hardware, anything that can help boost app performance is a boon for developers.

That’s why at Connect 2021 last week, Meta introduced a new Quest rendering technology called Application Spacewarp which it says can improve application performance by nearly 70%.

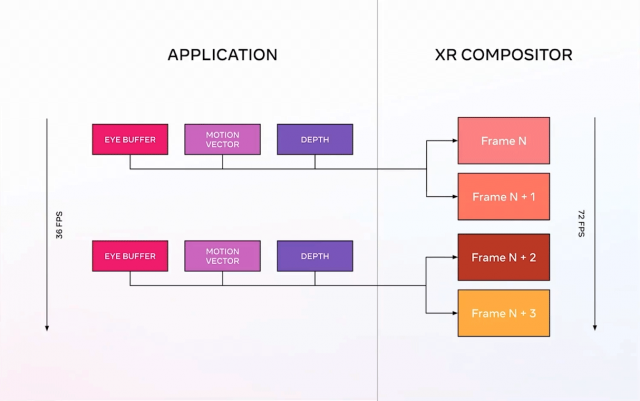

The technique achieves this by allowing applications to run at half framerate (for instance, 36 FPS instead of 72 FPS). The system then generates a synthetic frame, based on the motion in the previous frame, and shows it between each pair of rendered frames. Visually, the app appears to be running at the same rate as a full-framerate app, but only half of the normal rendering work needs to be done.
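The cadence described above can be sketched as a simple display schedule. This is only an illustration of how rendered and synthetic frames alternate, not Meta's implementation; the function name `display_schedule` and the "rendered"/"synthetic" labels are made up for the example.

```python
def display_schedule(display_hz, refreshes):
    """Return (time_ms, source) for each display refresh with the app at half rate.

    Even-numbered refreshes show a frame the app actually rendered;
    odd-numbered refreshes show a synthetic frame generated by the system.
    """
    refresh_ms = 1000.0 / display_hz
    schedule = []
    for i in range(refreshes):
        source = "rendered" if i % 2 == 0 else "synthetic"
        schedule.append((round(i * refresh_ms, 1), source))
    return schedule


if __name__ == "__main__":
    # A 72Hz display fed by an app rendering at 36 FPS.
    for time_ms, source in display_schedule(72, 4):
        print(f"{time_ms:5.1f} ms  {source}")
```

The display still refreshes every 13.9 ms or so, but the app only has to deliver a new frame on every second refresh.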

An application targeting 36 FPS has twice as much time to render each frame compared to running at 72 FPS; that extra time can be spent by developers however they’d like (for instance, to render at a higher resolution, use better anti-aliasing, increase geometric complexity, or put more objects on screen).

Of course, Application Spacewarp itself needs some of the freed-up compute time to do its work. Meta, having tested the system with a number of existing Quest applications, says that the technique increases the render time available to developers by up to 70%, even after Application Spacewarp finishes its work.
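To put rough numbers on that, here is a back-of-the-envelope calculation. It assumes the "up to 70%" figure means the app gets up to 1.7 times the per-frame render time it would have at a native 72 FPS; that reading, and the overhead derived from it, are interpretations for illustration rather than figures published by Meta.

```python
def frame_budget_ms(fps):
    """Time available per frame, in milliseconds, at a given framerate."""
    return 1000.0 / fps


full_rate = frame_budget_ms(72)  # about 13.9 ms per frame
half_rate = frame_budget_ms(36)  # about 27.8 ms per frame

# Assumption: "up to 70% more render time" means 1.7x the 72 FPS budget.
with_spacewarp = full_rate * 1.70

# Whatever is left of the 36 FPS frame is, under this reading, the cost
# of Application Spacewarp and related system work.
implied_overhead = half_rate - with_spacewarp

if __name__ == "__main__":
    print(f"72 FPS budget:             {full_rate:.1f} ms")
    print(f"36 FPS budget:             {half_rate:.1f} ms")
    print(f"App budget with Spacewarp: {with_spacewarp:.1f} ms")
    print(f"Implied overhead:          {implied_overhead:.1f} ms")
```

Under these assumptions, an app that had about 13.9 ms per frame at 72 FPS would have up to roughly 23.6 ms per frame, with around 4.2 ms of the doubled budget going to overhead.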

Developer Control

Developers using Application Spacewarp can target 36 FPS for 72Hz display, 45 FPS for 90Hz, or 60 FPS for 120Hz.

Meta Tech Lead Neel Bedekar posits that 45 FPS for 90Hz display is the “sweet spot” for developers using Application Spacewarp because it requires less compute than the current minimum bar (45 FPS instead of 72 FPS) and results in a higher refresh rate (90Hz instead of 72Hz). That makes it a fairly easy ‘drop-in’ solution which makes the app run better without requiring any additional optimization.

Of course 60 FPS for 120Hz display would be even better from a refresh rate standpoint, but in this case a 60 FPS app using Application Spacewarp would require additional optimization compared to a native 72 FPS app (because of the overhead compute used by Application Spacewarp).
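The trade-off between the three targets can be checked with some simple arithmetic. The sketch below assumes a fixed per-frame overhead for Application Spacewarp, back-derived from the "up to 70%" figure at 36 FPS; that overhead value is a guess for illustration only (the real cost will vary by app and refresh rate), and the function names are hypothetical.

```python
BASELINE_FPS = 72  # current minimum bar on Quest

# Assumption: fixed Spacewarp cost per app frame, derived from the "up to 70%"
# claim at 36 FPS (27.8 ms - 1.7 * 13.9 ms, about 4.2 ms). Not a Meta figure.
ASSUMED_OVERHEAD_MS = 1000.0 / 36 - 1.70 * (1000.0 / 72)


def app_budget_ms(display_hz):
    """Per-frame time left for the app when rendering at half the display rate."""
    app_fps = display_hz / 2
    return 1000.0 / app_fps - ASSUMED_OVERHEAD_MS


def compare_to_baseline(display_hz):
    """Return (app_fps, budget_ms, headroom_ms) relative to native 72 FPS."""
    baseline_ms = 1000.0 / BASELINE_FPS
    budget = app_budget_ms(display_hz)
    return display_hz / 2, budget, budget - baseline_ms


if __name__ == "__main__":
    for hz in (72, 90, 120):
        fps, budget, headroom = compare_to_baseline(hz)
        print(f"{hz:3d}Hz at {fps:.0f} FPS: {budget:5.1f} ms budget, "
              f"{headroom:+.1f} ms vs native 72 FPS")
```

With this assumed overhead, the 90Hz target leaves the app more time per frame than native 72 FPS, while the 120Hz target leaves slightly less, which matches the point that 60 FPS would need additional optimization.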

Meta emphasizes that Application Spacewarp is fully controllable by the developer on a frame-by-frame basis. That gives developers the flexibility to use the feature when they need it or disable it when it isn’t wanted, even on the fly.

Developers also have full control over the key data that goes into Application Spacewarp: depth buffers and motion vectors. Meta says that this control can help developers deal with edge cases and even find creative solutions to best take advantage of the system.

Lower Latency Than Full Framerate

Combined with other techniques, Meta says that Quest applications using Application Spacewarp can have even lower latency than their full framerate counterparts (that aren’t using the extra tech).

That’s thanks to additional techniques available to Quest developers—Phase Sync, Late Latching, and Positional Timewarp—all of which work together to minimize the time between sampling the user’s motion input and displaying a frame.

Differences Between Application Spacewarp (Quest) and Asynchronous Spacewarp (PC)

While a similar technique called Asynchronous Spacewarp has previously been employed on Oculus PC, Meta Tech Lead Neel Bedekar says that the Quest version (Application Spacewarp) can produce “significantly” better results because applications generate their own highly accurate motion vectors, which inform the creation of synthetic frames. In the Oculus PC version, motion vectors were estimated from finished frames, which makes for less accurate results.

Application Spacewarp Availability

Application Spacewarp will be available to Quest developers beginning in the next two weeks or so. Meta is promising the technique will support Unity, Unreal Engine, and native Quest development right out of the gate, including a “comprehensive developer guide.”

Per the update at the top of the article, Application Spacewarp is now available to Quest developers.