Oculus Quest was the main attraction at Oculus Connect 5 this week. Following the high-end standalone headset’s reveal, attendees of the conference got to try several demos to experience the headset’s inside-out positional head & hand tracking, including in an ‘arena-scale’ setting. We also got a handful of interesting details about the headset’s specs and capabilities.

Oculus Quest (formerly Project Santa Cruz) is officially set to launch in Spring 2019, priced at $400. While that’s twice the cost of the company’s lower-end Go headset, it could certainly be worth it for the much more immersive class of games that comes with positional tracking (which the Go lacks). But that will only be true if the inside-out tracking tech, which Oculus calls ‘Insight’, can really deliver.

Quest ‘Insight’ Tracking

Insight seems to be shaping up to be the best inside-out head and hand-tracking that I’ve seen to date. I say “seems” because I haven’t had a chance to test the headset’s tracking in a non-demo environment. The tracking system relies on recognizing features of the surrounding environment to determine the headset’s position in space; if you played in an empty room with shiny, perfectly lit white walls, it probably wouldn’t work at all since there wouldn’t be sufficient feature density for Insight to know what’s going on. Demo environments are often set up as best-case scenarios, and for all I know something in my house (or anyone’s house) could really throw the system off.

Oculus claims they’re tuning the headset’s tracking to be robust in a wide range of scenarios, and as long as that holds true, Insight will impress many. While other inside-out tracking systems either lack positional controller tracking entirely, or require some compromise on the size of the controller tracking volume, Quest’s four cameras, mounted on the corners of the headset, cover an impressively wide range that I effectively couldn’t defeat. A simple test I’ll often do with such systems is to move my outstretched arm as far outside of my own field of view as possible, then move it around (hoping to have left the view of the tracking cameras), then bring it back into the tracking volume from some other angle. Generally I’m trying to see my hand ‘pop’ into existence at that new point of entry, as the cameras pick it up and realize it wasn’t where they saw it last.

Despite my efforts, I couldn’t manage to make this happen. By the time my hand came anywhere near my own field of view, the hand was already re-acquired and placed properly (if it had even left the tracking volume at all). I would need to design a special test (using something like a positional audio source emitted from my virtual hand) to find out if I was even able to get my hand outside of the tracking volume, short of putting it directly behind my head or back.

So that means there are vanishingly few situations where tracking is going to harm your gameplay, even in situations that would normally be cited as problematic for inside-out tracking systems, like throwing a frisbee or hip-firing a gun. Two specific scenarios that I haven’t had a chance to test just yet (but believe will be important to test) are shooting a bow and aiming down sights with a two-handed weapon. In both scenarios, one of your hands typically ends up directly in front of, or next to, your face/headset, which could be a challenging situation for the tracking system. I’ll certainly test those situations next time I have Quest on my head.

In any case, it feels like Oculus has done a very good job with Quest and its Insight tracking system. Assuming Quest can achieve a consistently high level of robustness once it finds itself across a huge variety of rooms and lighting situations, I think the vast majority of players wouldn’t be able to reliably tell the difference between Quest’s inside-out tracking and Rift’s outside-in tracking in a blind test.

And that has big benefits beyond just getting rid of the external trackers. For one, it means the device has 360-degree roomscale tracking by default, rather than this being dependent on how many sensors a Rift user chooses to buy and how they decide to set them up. Additionally, it means players can easily play in much larger spaces than what was previously possible with the Rift.

At Connect I played a few demos with Quest, one of which was Superhot VR. The game was demoed in a larger-than-roomscale space (about the size of a large two-car garage) and I was free to walk wherever I wanted within that area. When it came to hand-tracking, I played the game exactly like I’ve played it on the Rift many times before, without noticing any issues. Being used to tethered headsets, it was also incredibly freeing to take a few steps in one direction and not see a Guardian/Chaperone boundary, then simply keep walking for many more steps before needing to think about the outside world.

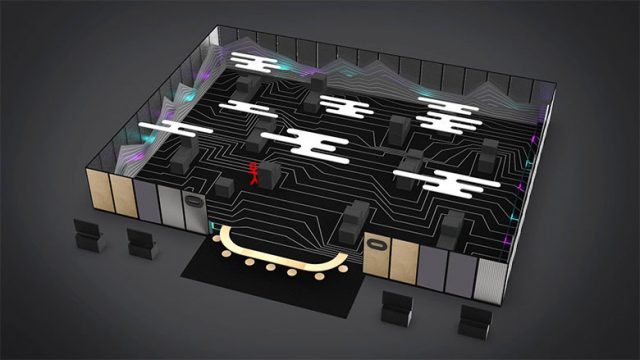

Oculus took this to the extreme at Connect in an experiment they put together to show how Quest tech is capable of ‘arena-scale’ tracking. They created a large arena space, about the size of a tennis court, and put six players wearing Quests inside to play a special version of Dead and Buried. Physical cover (boxes of varying sizes and shapes) covered the area, and players could physically walk anywhere around the arena and use the cover while shooting at the other team.

Throughout my time in this demo I didn’t see any jumping in the positional head tracking, even while I walked 10 to 15 feet at a time from one piece of cover to the next.

So, Quest is shaping up to deliver the same kind of quality positional tracking experience that most of us associate with high-end tethered headsets today, but now with more freedom and greater convenience. That’s a big deal at a $400 all-in price point.