Oculus Research’s director of computational imaging, Douglas Lanman, is scheduled to give a keynote at SID DisplayWeek in May that will explore the concept of “reactive displays” and their role in unlocking “next-generation” visuals in AR and VR headsets.

In one of three keynotes to be held during SID DisplayWeek 2018, Douglas Lanman, director of computational imaging at Oculus Research, will present a session titled Reactive Displays: Unlocking Next-Generation VR/AR Visuals with Eye Tracking on Tuesday, May 22nd.

The synopsis of the presentation reveals that Lanman will focus on eye-tracking technology and its potential for pushing VR and AR displays to the next level:

> As personal viewing devices, head-mounted displays offer a unique means to rapidly deliver richer visual experiences than past direct-view displays occupying a shared environment. Viewing optics, display components, and sensing elements may all be tuned for a single user. It is the latter element that helps differentiate from the past, with individualized eye tracking playing an important role in unlocking higher resolutions, wider fields of view, and more comfortable visuals than past displays. This talk will explore the “reactive display” concept and how it may impact VR/AR devices in the coming years.

The first generation of VR headsets has made it clear that, while VR is already quite immersive, there’s a long way to go before the visual fidelity of the virtual world matches human visual capabilities. Simply packing displays with more pixels and rendering higher-resolution imagery is a straightforward approach, but perhaps not as easy as it may seem, since every added pixel also adds to the rendering workload.

Over the last few years, a combination of eye tracking and foveated rendering has been proposed as a smarter path to greater visual fidelity in VR. Precise eye tracking could determine exactly where the user is looking, enabling foveated rendering: rendering at maximum fidelity only in the small central region of your vision that sees in high detail, while keeping the computational load in check by reducing rendering quality in your less detailed peripheral vision. Hardware foveated display technology could even move the most pixel-dense part of the display to the center of the user’s gaze, potentially reducing the challenge (and cost) of cramming more and more pixels onto a single panel.
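To make the foveated rendering idea more concrete, here is a minimal Python sketch. It is not Oculus’s (or anyone’s) actual implementation. It assumes a flat panel with a constant angular resolution and uses a simple three-zone scheme: every pixel is shaded near the gaze point, one shading sample covers each 2×2 block in a middle ring, and one covers each 4×4 block in the periphery. The `FoveationConfig` values (15 pixels per degree, 5° and 15° zone radii), the 1440×1600 panel size, and the helper names `shading_rate` and `shaded_fraction` are hypothetical choices made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class FoveationConfig:
    # Hypothetical parameters chosen for illustration only.
    pixels_per_degree: float = 15.0  # assumed constant angular resolution of the panel
    inner_radius_deg: float = 5.0    # full-detail zone around the gaze point
    middle_radius_deg: float = 15.0  # reduced-detail ring; beyond this is the periphery


def shading_rate(px, py, gaze_x, gaze_y, cfg):
    """Return the side length (1, 2, or 4) of the pixel block shaded by one sample."""
    # Approximate eccentricity (angle from the gaze point), assuming the same
    # number of pixels per degree everywhere on the panel.
    ecc_deg = math.hypot(px - gaze_x, py - gaze_y) / cfg.pixels_per_degree
    if ecc_deg <= cfg.inner_radius_deg:
        return 1  # shade every pixel
    if ecc_deg <= cfg.middle_radius_deg:
        return 2  # one shading sample per 2x2 block
    return 4      # one shading sample per 4x4 block


def shaded_fraction(width, height, gaze_x, gaze_y, cfg, tile=16):
    """Estimate shading work relative to shading every pixel at full rate."""
    work = 0.0
    total = 0
    for ty in range(0, height, tile):
        for tx in range(0, width, tile):
            # Pick one rate per tile, evaluated at the tile's center.
            rate = shading_rate(tx + tile / 2, ty + tile / 2, gaze_x, gaze_y, cfg)
            w = min(tile, width - tx)
            h = min(tile, height - ty)
            work += (w * h) / (rate * rate)
            total += w * h
    return work / total


if __name__ == "__main__":
    cfg = FoveationConfig()
    # Gaze at the center of a hypothetical 1440x1600 per-eye panel.
    fraction = shaded_fraction(1440, 1600, 720, 800, cfg)
    print(f"Shading work relative to full resolution: {fraction:.2f}")
```

Moving the gaze point changes which tiles receive full-rate shading, which is why the technique depends on fast, accurate eye tracking. A production system would likely also need to blend smoothly between zones so the transitions are not visible.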

The same eye-tracking approach could be used to correct various lens distortions no matter which direction the user is looking, which could improve visual fidelity and potentially make larger fields of view more practical.

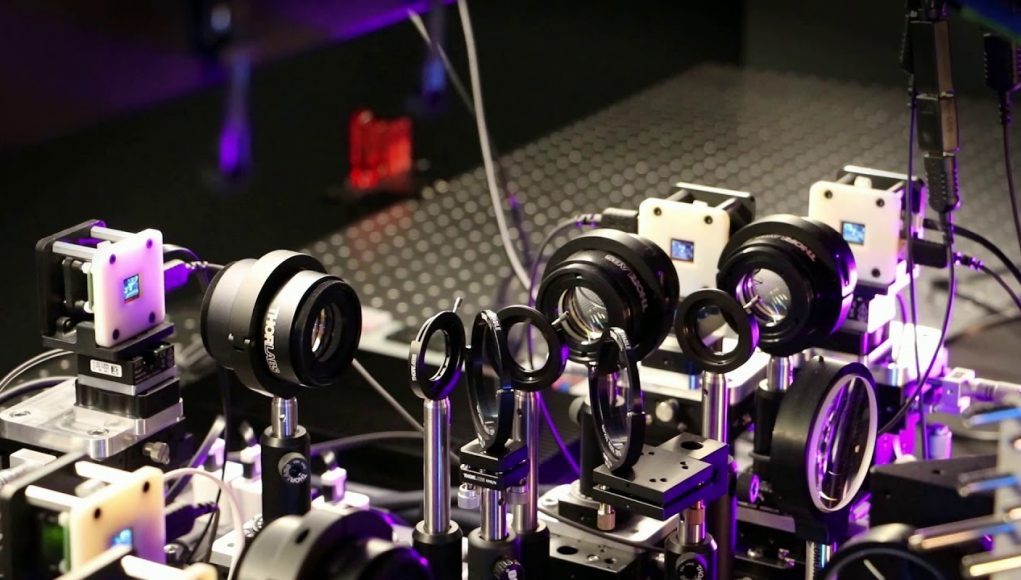

Lanman’s concept of a “reactive display” sounds, at first blush, a lot like the approach that NVIDIA Research calls “computational displays,” which it detailed in depth in a recent guest article on Road to VR. The idea is to make the display system itself, in a sense, aware of the viewer’s state, and to move key parts of display processing into the headset in order to achieve the highest quality and lowest latency.

Despite the benefits of eye tracking and foveated rendering, and some very compelling demonstrations, the field remains an area of active research, with no commercially available VR headset yet offering a seamless hardware/software solution. So it will be interesting to hear Lanman’s assessment of the state of these technologies and their applicability to future AR and VR headsets.