Unity is making a major push to position itself as the leading game engine for virtual reality. Today at its Vision Summit, the company made a series of new partner announcements and previewed big improvements coming to its VR rendering pipeline.

Latency is one of the most critical aspects of a real-time virtual reality experience. With too much latency, the virtual world won’t respond as quickly as the real world does, and this can quickly lead to discomfort in VR. Reducing the so-called ‘motion to photons’ latency (the time from the instant a user moves their head until the VR world moves around them) to below 20 milliseconds is vital to a convincing and comfortable VR experience.

One big part of that latency is the time it takes to render each individual frame of a VR experience. When a user moves their head, that movement data needs to be sent to the computer, and the computer must run through a lot of code and math in a matter of milliseconds to calculate what the next picture of the scene should look like.

More efficient rendering means that we can bring even better graphics into virtual reality without crossing that vital 20 millisecond threshold of latency.
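To make the budget arithmetic concrete, here is a minimal sketch of how a cheaper render stage leaves more room under the 20 millisecond target. Only the 20 ms figure comes from the discussion above; the function name and the per-stage timings (tracking, rendering, display) are hypothetical values chosen for illustration, not measurements from Unity or any headset.

```python
# Illustrative motion-to-photons budget.
# Only the 20 ms target comes from the article; every stage timing
# below is an assumed, made-up number for the sake of the arithmetic.
TARGET_LATENCY_MS = 20.0


def render_headroom_ms(tracking_ms, render_ms, display_ms,
                       target_ms=TARGET_LATENCY_MS):
    """Return the milliseconds left under the target after all stages run."""
    return target_ms - (tracking_ms + render_ms + display_ms)


# Hypothetical pipeline: 2 ms tracking, 11 ms rendering, 5 ms display.
before = render_headroom_ms(tracking_ms=2.0, render_ms=11.0, display_ms=5.0)

# The same hypothetical pipeline with a render stage that costs half as much.
after = render_headroom_ms(tracking_ms=2.0, render_ms=5.5, display_ms=5.0)

print(f"headroom before: {before:.1f} ms")
print(f"headroom after:  {after:.1f} ms")
```

Under these assumed numbers, the time saved in rendering becomes extra headroom that could be spent on richer visuals while staying under the same threshold.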

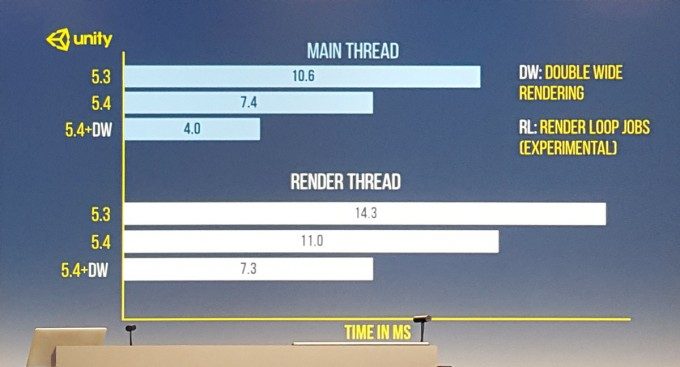

On stage today at the 2016 Vision Summit, Unity previewed major rendering improvements that are expected to launch in Unity 5.4 in March.

The company walked through some of the new rendering technology it is implementing, including Double Wide Rendering and Render Loop Jobs. Combined, these technologies cut the main thread and render thread timings in half.

What this ultimately means is that developers can spend the time saved on better graphics, physics, and lighting than they were able to before.

Unity 5.4 is expected to launch in mid-March, but it isn’t clear yet how much of this new VR rendering development will make it into the launch of 5.4.