With the hype surrounding Apple and Google’s (admittedly very cool) AR tracking tech now in the hands of tens of millions of developers and users, you might be tempted to think that immersive augmented reality experiences, the kind promised by the wild AR concept videos we’ve seen over the last decade, are just around the corner. While we are closer than ever, the truth is there are years of R&D and design work still standing between us and immersive AR for the mainstream. Here’s an overview of some of the key challenges being worked on today.

Immersive Field of View

It’s easy to watch cool ARKit videos and imagine that the full-screen view you’re seeing on your computer monitor would take up your entire natural field of view. The reality is that even today’s best portable AR headset dev kits still have very limited fields of view (far from the field of view of today’s VR headsets, which some still feel is lacking!).

HoloLens, in many ways the best AR headset a developer can buy today, has a tiny ~34 degree diagonal field of view, far less even than Google Cardboard (at ~60 degrees diagonal). A video from our pal Oliver Kreylos compares a full field of view to a ~34 degree field, and the result is that you’re only seeing a sliver of the augmented reality world at any one moment.

And that’s important, because in order to achieve reasonable levels of immersion, the augmented world needs to seamlessly blend with the real world. Without being able to see the bulk of the augmented reality world at once, you’ll find yourself ‘scanning’ unnaturally with your head—as if looking through a periscope—to find out where AR objects actually are around you, instead of allowing your brain’s intuitive mapping sense to map the AR world as part of the real world.

That’s not to say that an AR headset with a 34 degree field of view can’t be useful; it’s just that it isn’t immersive, and therefore doesn’t deeply engage your natural perception, which means it isn’t well suited for the sort of intuitive human-computer interaction that’s ideal for consumers and entertainment purposes.

“But Ben,” I hear some of you say, “what about the Meta 2 AR headset and its 90 degree field of view?” Good question.

Yes, Meta 2 has the widest field of view of an AR headset that we’ve seen yet—and one that approaches that of today’s VR headsets—but it’s also bulky, with no obvious path to shrinking the optical system without sacrificing a significant portion of the field of view.

Meta 2’s optics are actually quite simple. The big ‘hat brim’ portion of the headset contains a smartphone-like display that’s facing the ground. The large plastic visor is partially silvered on the inside and reflects what’s on the display into the user’s eyes. Shrinking the headset would mean shrinking both the display and the visor, which would naturally result in a reduced field of view. Meta 2 might be great for developers—who are willing to put up with a bulky (and still tethered) headset for the sake of developing for future devices—but it’s going to take a different optical approach to hit that field of view in a consumer form-factor.
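To see why a smaller visor means a narrower view, here is a rough geometric sketch. It assumes a deliberately simplified model that is not taken from Meta's design: the eye is a single point, and the reflective area is a flat window centered on the line of sight at a fixed distance. The function name and the millimeter values are hypothetical and chosen only for illustration; a real curved combiner would behave differently.

```python
import math


def fov_degrees(window_width_mm: float, eye_distance_mm: float) -> float:
    """Angle (in degrees) subtended at the eye by a flat, centered window.

    Simplified point-eye geometry: fov = 2 * atan(width / (2 * distance)).
    """
    return math.degrees(2 * math.atan(window_width_mm / (2 * eye_distance_mm)))


# Hypothetical numbers for illustration only, not Meta 2 measurements.
EYE_DISTANCE_MM = 80.0

wide_window = fov_degrees(160.0, EYE_DISTANCE_MM)    # large visor
narrow_window = fov_degrees(80.0, EYE_DISTANCE_MM)   # visor shrunk to half the width

print(f"160 mm window: {wide_window:.1f} degrees")
print(f" 80 mm window: {narrow_window:.1f} degrees")
```

Under these assumptions, halving the window width at the same eye distance drops the field of view from 90 degrees to roughly 53 degrees. The only ways to win it back in this model are to move the optics closer to the eye or to change the optical approach.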

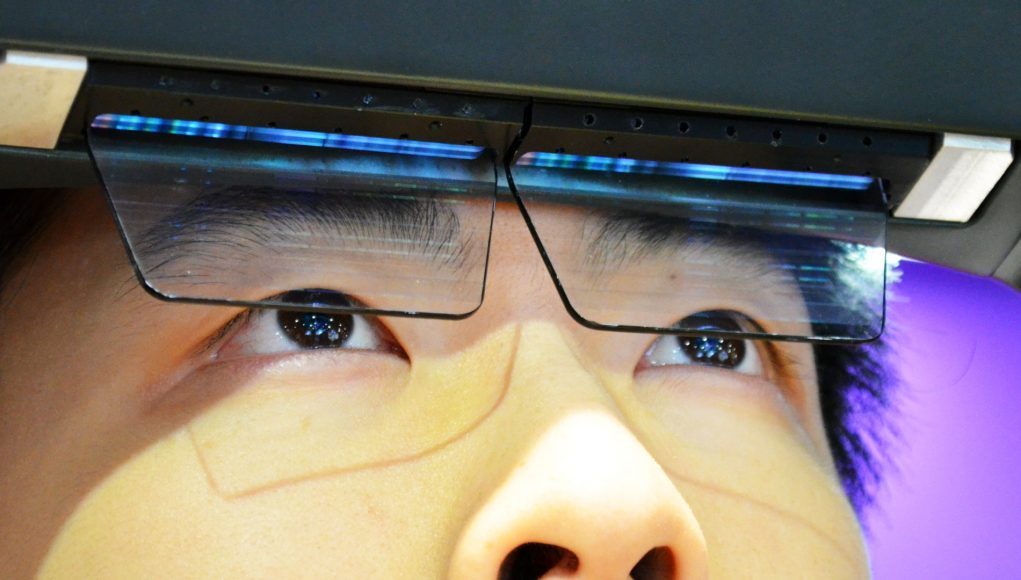

On that front, ODG is working with a similar but shrunken optical system, and landed at a 50 degree field of view on their top-of-the-line, $1,800 R-9 AR glasses, which are only just approaching a consumer-acceptable size. Taking a different optical approach (waveguide), Lumus has managed to squeeze a 55 degree field of view out of 2mm thick optics. But these are the very limits of AR’s field of view right now in form-factors that are close to properly portable.

A ~50 degree field of view isn’t half bad, but it’s still a far cry from today’s leading VR headsets, which have around a 110 degree field of view, and even then consumers are still demanding more. It’s hard to put a single figure on what makes for a truly immersive field of view; however, Oculus has in the past argued that you need at least 90 degrees to experience true Presence, and, at least anecdotally, the VR industry at large seems to concur.
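Degrees understate the gap, because the visible area grows faster than the angle. The sketch below compares the figures quoted above by treating each field of view as a circular cone and computing its solid angle. That is an assumption made for simplicity: real headsets have roughly rectangular views, and the quoted numbers mix diagonal and unspecified measurements, so the results are only a ballpark.

```python
import math


def cone_solid_angle(fov_deg: float) -> float:
    """Solid angle (steradians) of a circular cone with the given full apex angle."""
    half_angle = math.radians(fov_deg) / 2
    return 2 * math.pi * (1 - math.cos(half_angle))


# Field-of-view figures as quoted in the article, in degrees.
QUOTED_FOV_DEG = {
    "HoloLens": 34,
    "ODG R-9": 50,
    "Lumus waveguide": 55,
    "Google Cardboard": 60,
    "Meta 2": 90,
}

VR_REFERENCE_DEG = 110  # "today's leading VR headsets"


def share_of_vr_view(fov_deg: float) -> float:
    """Fraction of the 110 degree reference cone covered by a narrower cone."""
    return cone_solid_angle(fov_deg) / cone_solid_angle(VR_REFERENCE_DEG)


for name, fov in QUOTED_FOV_DEG.items():
    print(f"{name:>16}: {fov:3d} degrees, {share_of_vr_view(fov):.0%} of the VR reference")
```

By this rough measure, a 34 degree view covers only about a tenth of what a 110 degree headset shows, and even a 50 to 55 degree view covers around a quarter.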