Earlier this month at Apple’s annual WWDC conference, the company introduced its latest operating system, macOS Mojave (10.14). Due to roll out as a public beta later this month and to launch widely this fall as a free update, Mojave brings further VR-specific optimizations to Apple’s Metal graphics API, which the company detailed in a session at WWDC.

Speaking on stage during a WWDC session titled ‘Metal for VR’, Karol Gasiński, a member of the GPU Software Architecture Team at Apple, walked developers through the latest VR optimizations coming to macOS Mojave (10.14) and offered an overview of how developers should think about optimizing their game’s graphics pipeline for VR. He also announced that Mojave will include “plug and play support” for the Vive Pro.

Gasiński revealed new features of the Metal graphics API, designed to make VR rendering more efficient.

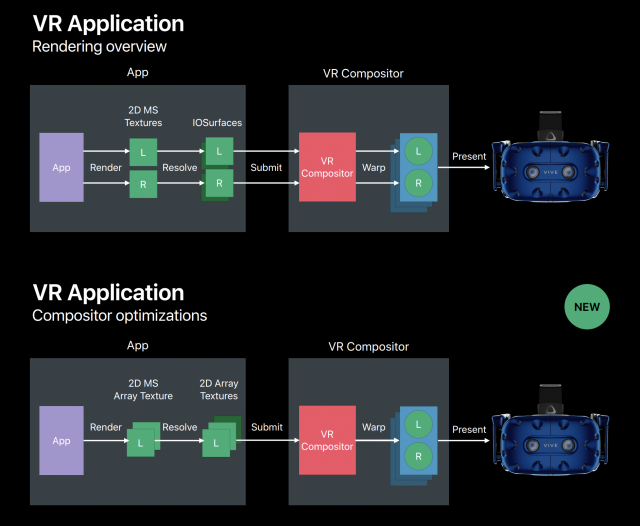

Previously, if developers wanted to take advantage of sharp MSAA rendering, they needed to use dedicated textures for each eye, which doubled the number of draw calls, render passes, and resolve steps. If a developer chose not to use MSAA, Gasiński said, they had to pick a different rendering layout depending on what they wanted to achieve.

Mojave will support a new Metal texture type called a 2D Multisample Array, which Gasiński says has “all the benefits of [the other rendering layouts] without any of the drawbacks.” It does this by offering separate control of the rendering space, the view count, and the anti-aliasing mode. This means developers can rely on a single rendering layout that adapts to any situation and needs only a single draw, render, and resolve pass.
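For native developers, a minimal sketch of what requesting such a texture might look like in Swift follows. The helper name `makeStereoMSAADescriptor`, the pixel format, and the sizes in the usage note are illustrative assumptions, not details from the session; the texture type itself is exposed in Metal as `MTLTextureType.type2DMultisampleArray`.

```swift
import Metal

// Hypothetical helper (not from the session): describes one MSAA render
// target that holds both eye views as slices of a single array texture.
@available(macOS 10.14, *)
func makeStereoMSAADescriptor(width: Int, height: Int, sampleCount: Int) -> MTLTextureDescriptor {
    let descriptor = MTLTextureDescriptor()
    descriptor.textureType = .type2DMultisampleArray // the new texture type
    descriptor.pixelFormat = .bgra8Unorm             // assumed format, for illustration
    descriptor.width = width                         // rendering space, per eye
    descriptor.height = height
    descriptor.arrayLength = 2                       // view count: one slice per eye
    descriptor.sampleCount = sampleCount             // anti-aliasing mode
    descriptor.usage = [.renderTarget]
    descriptor.storageMode = .private                // GPU-only memory
    return descriptor
}
```

The three properties set independently here (size, `arrayLength`, `sampleCount`) correspond to the separate controls Gasiński described. An app would typically confirm support with `device.supportsTextureSampleCount(4)` before passing the descriptor to `device.makeTexture(descriptor:)`.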

Gasiński further announced a new cross-process texture sharing feature, which makes Metal textures with complex structures (such as those that are multi-sampled, store depth, or include mip-maps) shareable. Previously, only simple 2D textures could be shared between processes, he said. The new feature allows cross-process sharing of any Metal texture, as long as it stays within the context of a single GPU.
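As a rough sketch of how an app might create a shareable texture with this feature, the Swift below uses Metal's `makeSharedTexture(descriptor:)` and `makeSharedTextureHandle()` calls. The helper name `makeShareableTexture`, the pixel format, and the usage flags are assumptions made for illustration, and the private storage mode reflects our understanding of the requirement rather than anything stated in the session.

```swift
import Metal

// Hypothetical helper (not from the session): creates a mip-mapped texture
// that another process on the same GPU can open through its handle.
@available(macOS 10.14, *)
func makeShareableTexture(device: MTLDevice, width: Int, height: Int)
    -> (texture: MTLTexture, handle: MTLSharedTextureHandle)? {
    let descriptor = MTLTextureDescriptor.texture2DDescriptor(
        pixelFormat: .bgra8Unorm,   // assumed format, for illustration
        width: width,
        height: height,
        mipmapped: true)            // a "complex" texture: it has a mip chain
    descriptor.storageMode = .private
    descriptor.usage = [.renderTarget, .shaderRead]

    guard let texture = device.makeSharedTexture(descriptor: descriptor),
          let handle = texture.makeSharedTextureHandle() else {
        return nil
    }
    return (texture, handle)
}

// In the receiving process (for example, a compositor), once the handle has
// been transferred, the texture would be reopened on the same GPU with:
//     let texture = device.makeSharedTexture(handle: handle)
```

How the handle travels between processes is left out here; `MTLSharedTextureHandle` supports secure coding, so an XPC connection is one plausible transport.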

The changes also allow the VR Compositor (the process which adjusts the textures to be correctly displayed on a VR headset) to make its warp adjustment for both eyes in a single render pass. Overall, these changes simplify the rendering pipeline all the way from the app to the headset, as Gasiński visualized during the session.

These changes will be immediately available to native VR app developers on Mojave, and are likely to eventually see support in development tools like Unity and Unreal Engine.

Gasiński spent the rest of the session detailing strategies by which VR app developers can optimize the inner workings of their apps to maximize rendering efficiency and improve performance. Apple developers working on native VR apps should consider watching the entire segment (starting at 12:22).

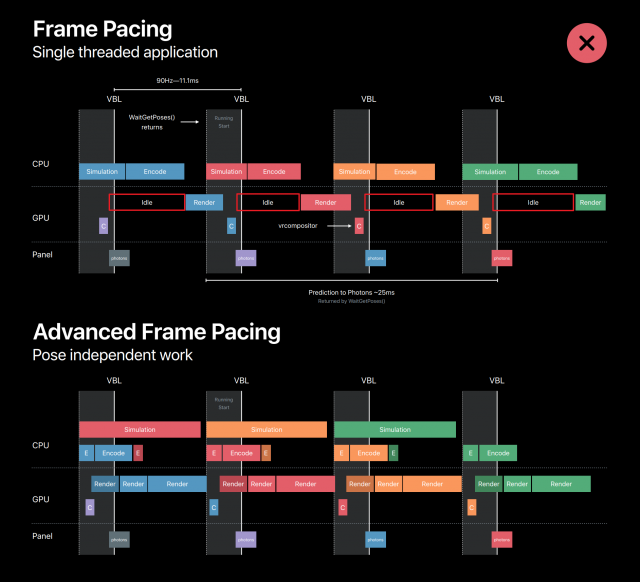

In short, Gasiński talked about advanced frame pacing techniques, saying that developers should use multi-threading, split command buffers, separate pose-dependent and pose-independent workloads, separate workloads by frequency of update (to benefit from multi-GPU configurations), and give each GPU its own rendering thread so that rendering is asynchronous.
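To make the idea of split command buffers concrete, here is a simplified Swift sketch in which pose-independent work is committed before the app waits for the headset pose. The function `submitFrame` and its `waitForPose` closure are hypothetical stand-ins, the actual encoding of render passes is omitted, and this is an illustration of the general approach rather than the code shown in the session.

```swift
import Metal

// Hypothetical frame submission (not from the session): splits one frame
// into two command buffers so the GPU can start before the pose is known.
func submitFrame(queue: MTLCommandQueue, waitForPose: () -> Void) -> [MTLCommandBuffer] {
    // 1. Pose-independent work (e.g. shadow maps) does not need the headset
    //    pose, so it is encoded and committed right away.
    guard let poseIndependent = queue.makeCommandBuffer() else { return [] }
    poseIndependent.label = "pose-independent"
    // ... encode pose-independent passes here ...
    poseIndependent.commit()

    // 2. Block until the latest predicted pose is available. Meanwhile the
    //    GPU is already busy with the buffer committed above.
    waitForPose()

    // 3. Pose-dependent work (the eye views) is encoded with the fresh pose.
    guard let poseDependent = queue.makeCommandBuffer() else { return [poseIndependent] }
    poseDependent.label = "pose-dependent"
    // ... encode eye-view passes here ...
    poseDependent.commit()

    return [poseIndependent, poseDependent]
}
```

Committing the first buffer early is what shrinks the idle gap: the GPU is not left waiting for the CPU to finish encoding the whole frame before it can begin.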

The latter techniques, which benefit from multi-GPU configurations, are generally unique to Apple, considering the company’s growing support for external GPU and multi-GPU systems. Even when a MacBook is using an eGPU for VR rendering, the MacBook’s internal GPU can contribute to the overall workload if the app’s rendering pipeline is structured appropriately, even though the internal GPU alone wouldn’t be enough to run a VR app. Most PC-based VR applications today are built assuming all work will be done on a single GPU.

Overall, the techniques were aimed at minimizing idle GPU time, allowing as much work to be done as possible in the limited timeframe available for rendering.