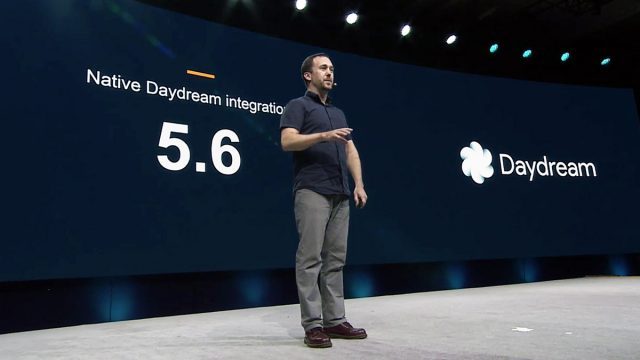

During the opening presentation at today’s Unity Vision Summit, Nathan Martz, Developer Platforms Product Manager at Google, took to the stage to talk about new tools that the company is releasing to help developers create high-performance apps for Daydream, Google’s high-end Android VR platform.

Having launched the first Daydream headset and phone in late 2016, Martz said the next step is to grow and scale the platform.

“This year we’re focused on scaling [Daydream and Tango] through the larger Android ecosystem. We know that as cool as these devices are—and as hard as they, frankly, are to make—ultimately people are going to buy them for the experiences that they enable,” he said.

Scaling means getting developers to build VR experiences that are not only fun but highly optimized, and Google wants to make that as easy as possible. Unity 5.6 got native Daydream support back in March, allowing developers to build for the Android VR platform without downloading any custom builds or technical previews of the game engine.

But it doesn’t stop there; Google is continuing to invest in the Daydream development platform to help developers make great VR content, Martz said. The company is doing that with new tools that aim to make the lives of Daydream developers easier in several ways, from performance and profiling to templates of best practices for VR interaction. On stage (see the video heading this article), Martz talked about those new tools, which are due to be released to developers soon:

Daydream Renderer

VR already presents a high performance bar due to the need to achieve steady high framerates while rendering high resolutions in stereo. In order to achieve VR on mobile devices, applications need to be carefully optimized to deliver the required performance. For many developers, that’s meant relying on less computationally intensive ‘baked’ lighting and shadows that are ultimately displayed as static textures rather than real-time lighting.

Google says its new Daydream Renderer is a suite of highly optimized tools designed to tackle the challenge of bringing high-quality lighting to Daydream apps. With the tools, the company says that developers can achieve dynamic lighting and shadowing in stereo at 60 FPS on today’s flagship phones, bringing mobile VR another step closer to the sort of modern graphics expected on game consoles and PCs.

Instant Preview

With traditional mobile development, Martz says, developers need to write code on their computer and then spend a few minutes compiling it and transferring it to their Android device in order to do an on-device test. But if a few minutes stands between the time a change is made and the time the change can be tested, that’s less time available for iterating on those changes to get them just right.

Instant Preview will make the process of running on-device tests take “seconds not minutes,” Martz said, allowing developers to iterate rapidly and leading to a better end product. Instant Preview is achieved through changes to both the software on the computer and the hardware in phones, Martz says, and the latency is low enough that these instant changes can be seen and tested through a Daydream headset.

GAPID & PerfHUD

Getting down to the nitty-gritty of the hardware: a great VR app doesn’t just look great, it also needs to be able to operate within the performance and thermal confines of a smartphone (no easy feat). If the phone gets too hot, it will have to throttle down performance to keep from overheating, which can cause a drop in VR performance, if not complete termination of the VR app to allow the phone to cool. With varying devices and environmental conditions, tuning mobile VR games to operate effectively for long durations can be especially challenging.

PerfHUD is designed to let developers see the hardware device’s vitals in and out of VR, says Martz, allowing devs to identify which areas of their games and apps are pushing the phone’s hardware too hard.

GAPID serves a similar function by allowing developers to do “deep GPU profiling and static analysis” right from their PC, providing insight into how the hardware and software are interacting to drive performance, and once again allowing developers to keep an eye out for problem areas that could be bringing performance down.

Daydream Elements

Even now, with several major consumer headsets on the market for more than a year, there are still huge variations in interaction design from one VR app to the next. VR users would definitely benefit from some consistency, much like how PC and smartphone apps use common methods of interaction.

To share what the company has learned about best practices for VR interaction design, Google plans to release Daydream Elements, which Martz described as a “modular, open-source application that contains focused examples of best practices.” Daydream Elements will offer up templates of commonly needed VR interactions—like manipulating and activating objects and selecting items on menus—and Google is encouraging developers to take these templates and drop them directly into their own apps as needed.

– – — – –

Martz says that the Daydream Renderer, Instant Preview, and Daydream Elements will launch this month, while PerfHUD and GAPID will come this summer.

Especially now with the launch of the Gear VR Controller, developing for Daydream is not so different from developing for Gear VR (also supported by Unity), as both run on Android. Google didn’t explicitly mention it, but it’s possible that some of these tools could be useful for Gear VR development as well. We’ve reached out to the company for clarification.