The Oculus Touch motion-tracked controllers have launched today after 8 months of the Rift solely supporting gamepad experiences. I wanted to take a moment to dig into some of the more subtle technical nuances when comparing the Oculus Rift & Touch with the HTC Vive, but also some of the larger VR ecosystem considerations to take into account. So today’s episode of the Voices of VR podcast is an op-ed analysis comparing the Touch controller and room-scale tracking technologies, as well as the overall content and developer ecosystems.

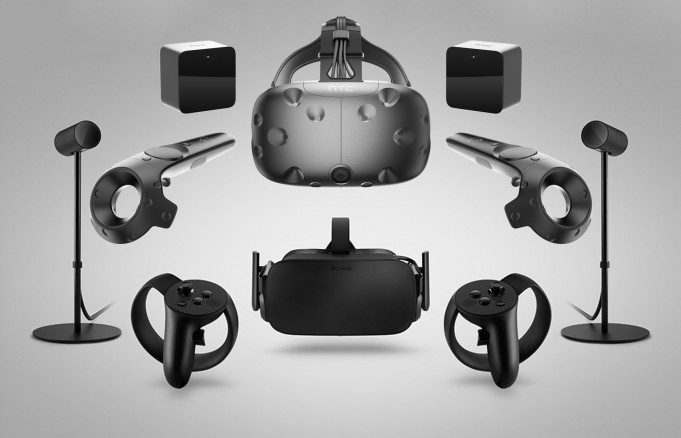

In terms of industrial design and ergonomics, I do prefer the Rift headset over the Vive. There has also been a lot of praise for the ergonomics of the Touch controllers, and I do agree that they are a lot more comfortable than the Vive’s Lighthouse wands. However, Oculus’ camera-based tracking system was not optimized to support roomscale VR experiences of the same size as the Vive’s, and TESTED has confirmed that a diagonally-configured, two-camera Oculus sensor setup is not as robust as the Vive’s Lighthouse beacons.

Oculus’ tracking system is mainly optimized for “standing” VR experiences that are specifically designed for their front-facing cameras. Robert McGregor has argued that Oculus wasn’t prepared for room-scale VR when they launched the Rift CV1, and that they had made a strategic bet that most people would be playing VR games sitting down in front of their computer. That bet led Oculus to ship shorter cables for both the Rift headset and its tracking sensors, a decision that now hampers them. These work great for forward-facing or standing 360 experiences at your desk, but the cord management logistics and camera-based tracking volumes are highly suboptimal for achieving a robust roomscale experience.

This thread on the Oculus subreddit discusses more of the technical differences in tracking capabilities between the two systems. I think it’s important to remember in comparing the Rift with the Vive that a lot of the Touch launch content has been specifically optimized for front-facing and standing 360 experiences. I’ve had multiple developers tell me that Oculus has had developers change their experiences to avoid limitations in its tracking solution, such as removing the need to pick things up off the floor, because the Touch can easily lose tracking when you reach for the ground. So to really push the limits of Oculus’ room-scale capabilities, the Rift should be tested against a variety of Vive room-scale games from Steam, which allow for an equal comparison.

This is possible because Valve decided to take an open development philosophy in having their SteamVR SDK support both the HTC Vive and Oculus Rift hardware. This means that most games bought on SteamVR will have automatic support for the Rift, but that same game bought on Oculus Home won’t support being able to play it on the Vive (unless the game is adaptable with some unofficial hacks). The important point is that SteamVR’s support for Rift games enables people to test the same game on both platforms.

I unfortunately ran into a number of technical issues with my Oculus Touch set-up that prevented me from testing the limits of Oculus’ camera-based tracking, and so I’m going to have to reserve my final judgment on this matter; Road to VR published a video showing the extents of the two-sensor, front-facing Touch setup as well as their own review of Touch. But I think it’s important to remember some of these VR design nuances and limitations when comparing the two systems.

The Oculus Touch controllers do have more buttons available for gameplay and professional applications than the initial Vive controllers. This means that the Oculus gaming content has easier access to these buttons for abstracted expressions of your will. A lot of the VR games on the Vive have avoided this level of button abstraction, and I believe that there’s a tradeoff in the different levels of presence that these buttons can cultivate. The Vive focuses you more on embodied presence while the Oculus has a bit more capability for an abstracted, active presence.

It’ll be interesting to see in the long run whether this abstraction advantage enables richer gameplay on the Oculus platform or if players prefer the types of experiences that minimize the abstractions and maximize the number of intuitive movements. Having user interfaces with complicated button combinations could limit VR’s reach beyond gamers, and so it’s a risk if VR developers create experiences with too steep a learning curve. But having access to more buttons could also enable richer gameplay mechanics for some VR games.

The other big point that I wanted to make is that Valve opened up royalty-free Lighthouse tracking back in August, and so I expect to see a lot of new Lighthouse peripherals launching at CES. There was not a similar announcement from Oculus at Oculus Connect 3, which solidifies my impression that the Vive is actively cultivating an open ecosystem while Oculus is going down a more closed, ‘walled garden’ route. They’re focusing more on vertically-integrated solutions with a highly-curated selection of exclusive games, which prioritizes the benefits to the end consumer rather than supporting a diverse developer ecosystem. It’s enabled Oculus to launch with a robust line-up of games, but time will tell which ecosystem VR developers will invest their time in supporting in the future. For some developers, a minimum viable product is going to be to launch their game via Steam for the Vive as a first-class platform with Rift support as an automated afterthought.

At the moment owning an Oculus Rift headset with Touch arguably gives you access to more content if you include their exclusive content as well as the Vive content that’s automatically supported on Steam. However, there may be potential limitations of Oculus’ tracking technology in viably supporting some of the full room-scale experiences, which may hamper some people’s experiences of that Vive content. It’s also unclear as to whether or not Rift users will be able to fully utilize the new Lighthouse peripherals that are expected to launch next year.

From a VR design perspective, I believe that Oculus’ decision to wait eight months to launch Touch controllers as well as not natively supporting room-scale experiences has fractured the VR developer ecosystem into three distinct groups: sit-down gamepad VR, front-facing VR, and full roomscale VR. The lack of tracking parity between the Rift and the Vive has created a complicated and fractured ecosystem for VR developers to navigate since there are so many tradeoffs depending on which group is targeted.

While there is a lot of press consensus that the Oculus hardware with the Rift headset and Touch controllers enjoys an ergonomic advantage at the moment, I personally have deeper concerns about Oculus’ inferior tracking technology, the fractured ecosystem that’s being propagated through not making roomscale a first-class citizen, and a number of private developer frustrations with Oculus’ closed mindset. These are larger issues that make it more difficult for VR developers to support both hardware platforms and to sustain a healthy ecosystem of content development.

In the end, mainstream VR has a higher chance of success if VR developers can financially survive, and if the quality bar for content is high enough for consumers to justify the time and money invested. Time will tell how the story plays out, but right now the Touch launch is a significant day for Rift consumers who have been waiting to fully step into the game since that promise was made in the original Oculus Kickstarter back in 2012.

Music: Fatality & Summer Trip