New instructional videos, supposedly leaked from a HoloLens development program, give us a glimpse of what everyday interaction with Windows running in augmented reality looks like.

Microsoft first unveiled their augmented reality headset HoloLens at a special Windows 10 event last year. Since then, we’ve seen some impressive demonstrations of the technology, including live stage examples of how augmented reality technology might one day change our lives.

But if Microsoft ever decides to sell HoloLens to the public, how will users navigate and interact with Windows 10 Holographic, the AR-focused flavour of Microsoft’s operating system designed for HoloLens?

These leaked tutorial videos are for the HoloLens application Actiongram, a recently revealed drag-and-drop holographic ‘sticker’ app, which lets you drop 3D models into your real-world surroundings. Whilst the app itself is intriguingly useless, the videos walk users through the basics of how to launch Actiongram from the Windows Holographic equivalent of a start menu and generally interact with the user interface.

It’s the most we’ve yet seen of Windows Holographic in action, running in real time inside an augmented reality session, and it gives you a glimpse of how Microsoft envisions human interaction with Windows working in lieu of a keyboard or mouse.

The HoloLens’ primary interaction method is via human hand gestures, interpreted by the headset. Microsoft have had to invent a new gestural language so that users can interact with Windows with just their hands, and you can see (and hear reference to) a few of these gestures in the videos. But if you’re wondering what “Bloom to the shell” and “Airtap” mean, Microsoft’s Windows Holographic instruction pages are now online.

According to the documentation, a Bloom gesture requires a user to “hold out your hand, palm up, with your fingertips together. Then open your hand.” The gesture can be seen in part in the image below.

The Airtap gesture has had the most airing in public demonstrations and is mostly self-explanatory. The user extends their index finger and thumb in front of them and brings the two together (kind of like the ‘pinch’ gesture on mobile phones) – this seems to be most analogous to the left mouse click action in standard Windows. The gesture can then be extended by holding the thumb and forefinger together and moving your hand – useful for dragging objects, as demonstrated in the video below.

Other gestures, such as a prolonged extension of the user’s index finger, bring up object-specific context menus, as seen in the above video where the user resizes the ‘hologram’.

These gestures and actions are combined with the user’s gaze, which acts as a mouse pointer in certain scenarios. For example, when launching an application initially, you’re required by Windows Holographic to ‘place’ the application somewhere relative to your physical space. This can seemingly be anywhere: on walls, ceilings or even furniture.

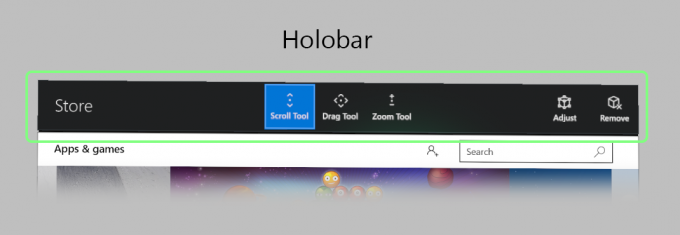

Also watch the above videos for what Microsoft calls the ‘Holobar’, a context-sensitive set of controls for the 2D representation of HoloLens apps, which allows you to control the relative location, shape and size of the ‘window’.

The subject of how we’re expected to interact with augmented realities is almost as interesting as how we display them, and seemingly just as challenging. These are early days for Microsoft’s HoloLens, but it’s interesting to watch it, and companies like Meta, begin to define the language of interactivity for this new form of computing interface.

The ‘Development Edition’ of Microsoft’s HoloLens is to be made available to those who sign up to the HoloLens development program and are willing to lay down a cool $3000 for the privilege. These units are due to begin shipping on March 30th.